Scale-Invariant Programmability and the Continuum of Reality

0. Orientation

What kind of world can be known at all? Not just what happens, but what kind of structure makes repeatable facts and reliable prediction possible?

We have two vocabularies for describing change: Physics speaks in forces and fields, while computation speaks in inputs and outputs. What if they are two coordinate systems for the same underlying process?

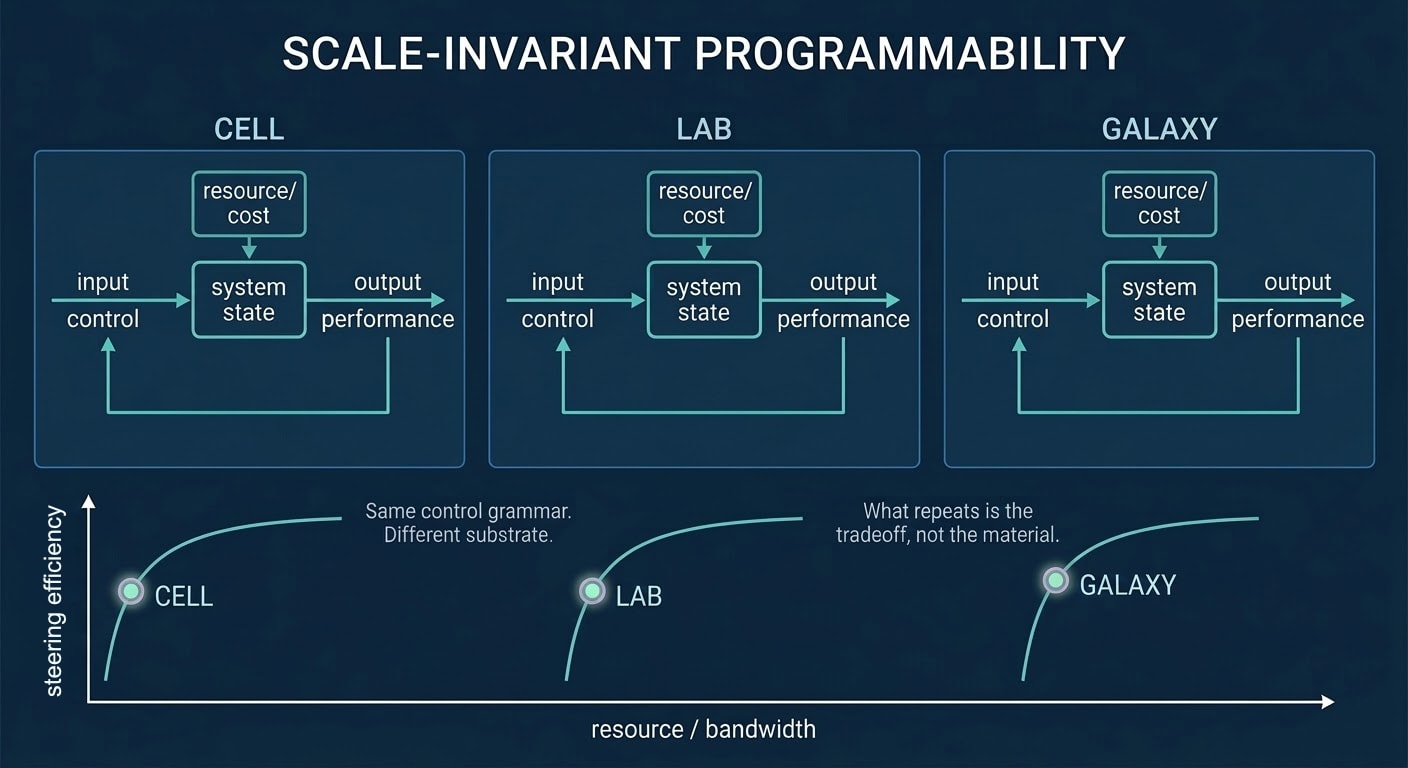

This essay explores the possibility that physical evolution and information processing are two views of the same feedback process. If so, the practical consequence is direct: The same principles that improve a feedback controller should improve a measurement device, or a material, or a model, or a market. We'll make that claim concrete in Section 1 by showing how measurement and control render continuous dynamics into discrete records under bandwidth limits.

Engineers already work with a small vocabulary of feedback moves: damping, amplification, coupling, delay. The bet is that the same motifs recur across domains, so the "bang per buck" of steering has a similar shape across scales. We call this scale-invariant programmability.

Underneath it is retunability: the ability of reality to revise how it unfolds while remaining coherent.

Accept this framing and the map changes. Knowledge becomes participation. Models become instruments. Design becomes resonance. Practically, you stop asking only "what does this predict?" and also ask: "how much steering per joule does this buy, and what bandwidth does it assume?"

Three Layers of Inquiry

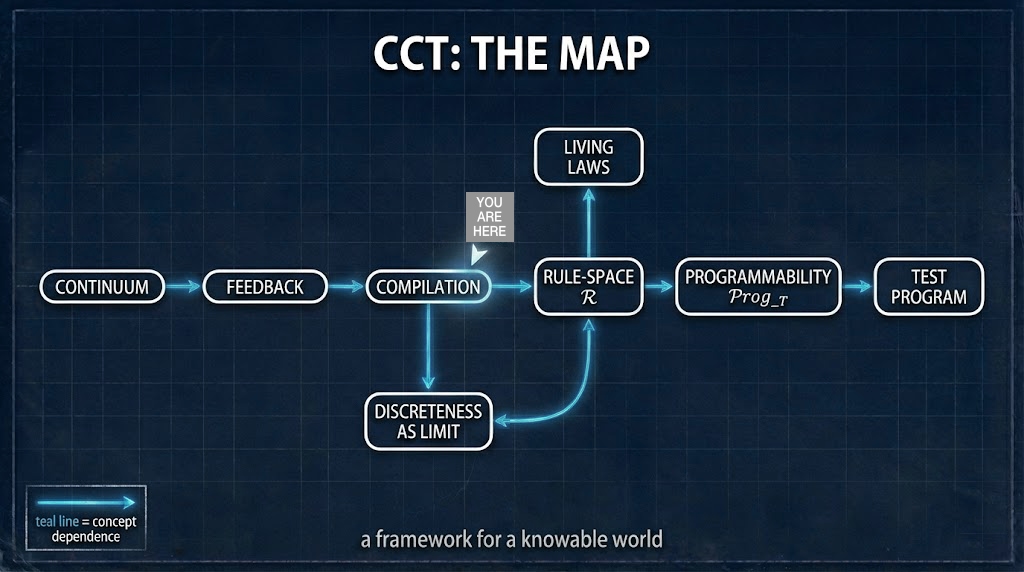

CCT (the Continuum Computation Thesis) emerged from engineering practice: tuning feedback in electromagnetic and plasma fields. That work kept pointing to the same lesson: physical stability and informational self-organization share one grammar. We present CCT across three epistemic layers:

- Layer 1 (Model Theorems): rigorous results for finite-state, capacity-limited observers near equilibrium.

- Layer 2 (Engineering Regime): design constraints and scaling laws for lab-scale controllers that approximately satisfy Layer 1 assumptions, so that claims can be stated in a constraint-complete form rather than in idealized terms.

- Layer 3 (Meta-Law Conjecture): the speculative possibility that any physically realizable observer falls into a bandwidth-limited class and obeys analogues of these bounds, and that apparent physical laws are emergent stability equilibria rather than fixed axioms.

Across the layers, the point is not to add machinery but to discipline interpretation: what CCT asserts should remain stable under stated limits of observation and intervention. This essay develops Layer 3 while staying anchored to Layers 1 and 2.

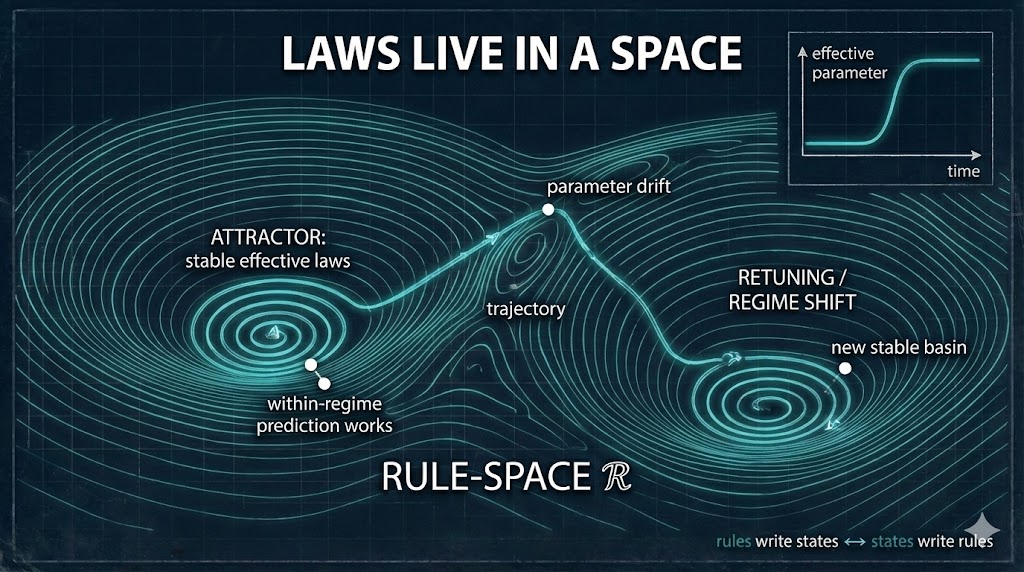

Layer 3 treats general relativity (GR), quantum mechanics (QM), and the Standard Model as stable effective regimes (attractors in rule-space) and asks what operational conditions (bandwidth limits, feedback structure, and control resources) stabilize them. Their empirical success becomes one of the first things Layer 3 must explain: why familiar relativistic, quantum, and field-theoretic descriptions remain so stable under the observer/controller regimes tested so far, and what kind of controlled regime boundary would be needed before any departure could count. The method stays operational even at the speculative edge.

Layer 3 remains conjectural, but it is no longer untethered: the current simulation program has identified concrete observables—observer-slider transitions and propagation-residual tests—that make its interpretive questions live, constraint-complete, and in principle falsifiable.

Geometric picture (optional)

Some readers may prefer a geometric rendering of the same idea. Retuning means sweeping control settings; calibration means specifying how the “same” inferred quantity is identified as those settings change. In this picture, control settings form the base, effective descriptions vary over them, a consistent choice across settings is a section, and the calibration rule supplies a transport law (a “connection” in the operational sense) for comparing nearby inferences. Finite bandwidth limits how sharply that transport can be estimated, and path dependence under loops provides an operational notion of drift. This language is optional: it adds no ontology beyond the declared controls and estimators, and simply clarifies when retuning is re-description and when it signals regime change.
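To make "path dependence under loops" concrete, here is a minimal sketch. It assumes, purely for illustration, that the calibration rule between two neighbouring control settings is an affine map on the inferred quantity (`y = gain * x + offset`); the class `Affine` and the functions `compose` and `loop_drift` are hypothetical names, not part of any CCT codebase. Composing the rules around a closed loop of settings and comparing the result with the identity gives a holonomy-like drift measure: zero means the retuning was pure re-description; a persistent nonzero value flags drift.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Affine:
    """Hypothetical calibration rule between neighbouring control settings: y = gain*x + offset."""
    gain: float
    offset: float

    def __call__(self, x: float) -> float:
        return self.gain * x + self.offset


def compose(maps: Sequence[Affine]) -> Affine:
    """Net map obtained by applying `maps` in order (first element first)."""
    gain, offset = 1.0, 0.0  # start from the identity map
    for m in maps:
        # m(gain*x + offset) = (m.gain*gain)*x + (m.gain*offset + m.offset)
        gain, offset = m.gain * gain, m.gain * offset + m.offset
    return Affine(gain, offset)


def loop_drift(maps: Sequence[Affine]) -> tuple[float, float]:
    """How far the closed-loop map departs from the identity: (gain - 1, offset)."""
    net = compose(maps)
    return net.gain - 1.0, net.offset


# Out-and-back loop whose return leg exactly undoes the outbound leg.
consistent = [Affine(2.0, 1.0), Affine(0.5, -0.5)]
# Same loop, but the return leg's gain is slightly mis-calibrated.
drifting = [Affine(2.0, 1.0), Affine(0.51, -0.5)]

print(loop_drift(consistent))  # (0.0, 0.0)
print(loop_drift(drifting))    # roughly (0.02, 0.01): path dependence, i.e. drift
```

In practice each map would be estimated from finite data, so a nonzero loop drift should only count once it exceeds the estimator's uncertainty; that is where finite bandwidth enters the picture.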

Core Conjecture

At its most speculative layer, CCT treats GR, QM, and the Standard Model as emergent effective regimes—stable local attractors in a larger rule-space. That is an organizing conjecture, not a derivation claimed here. The first burden is to account for why their predictions form exceptionally stable effective regimes while defining what would count as a genuine departure. On this view, physical "constants" including ℏ, c, and G behave like feedback-stabilized parameters inside those attractors, not primitive decrees.

We state this conjecture and focus on its operational consequences: Phase 1–2 establishes calibration and control metrics within current physics; Phase 3+ defines drift/deviation tests that could detect emergent-law behavior if it exists. Phase 1–2 success belongs to the engineering layer; Layer 3 requires additional evidence that survives stricter deviation tests.

This framework is meant to be falsifiable and to prevent epicycles. The validation roadmap appears in Section 5.

Key terms

- Rule-space (\(\mathcal{R}\)): the space of effective parameters and constraints that characterize a regime (what we ordinarily package as its “laws”), together with the limited ways feedback can shift them.

- RFH (Resolution Filter Hypothesis): apparent discreteness decreases as measurement bandwidth \(B\) (information throughput) increases, often with a scaling exponent \(\alpha\).

- Bandwidth (\(B\)): the rate at which an observer extracts task-relevant information from a process (e.g., Fisher information per second or a proxy).

- Programmability (\(\mathsf{Prog}_T\)): steering capacity per resource, measured as reliable causal control bits per joule over horizon \(T\).

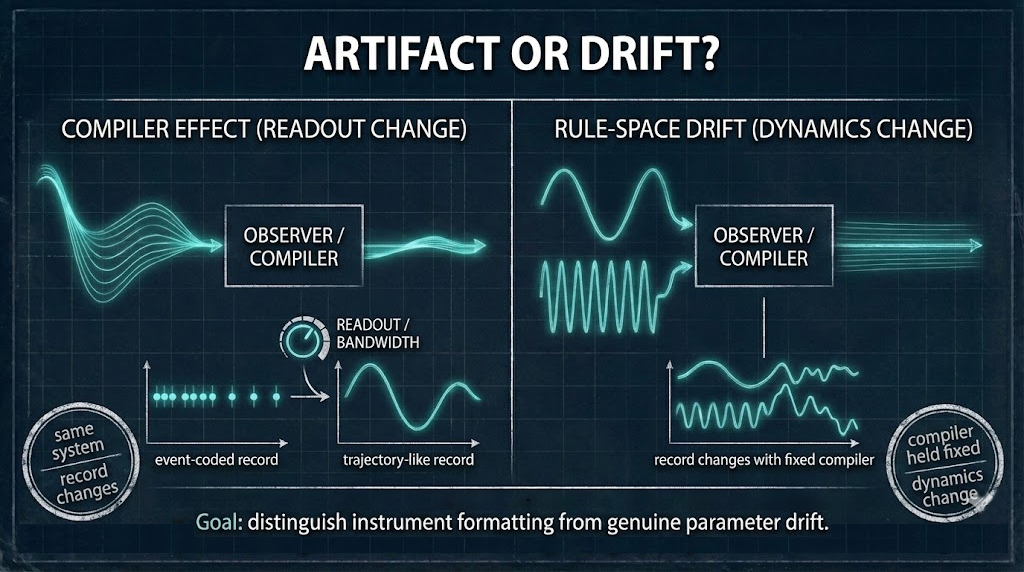

- Compiler / compilation: any finite-bandwidth observer or controller that renders continuous dynamics into discrete records (clicks, bits, particles).

- Retunability: the ability of a system to revise effective parameters under feedback while remaining coherent.
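As a concrete reading of RFH, suppose a discreteness score \(D\) has been measured at several bandwidths \(B\) and follows the single power law \(D(B) \approx k\,B^{-\alpha}\). That functional form, the function name `fit_rfh_exponent`, and the synthetic data below are illustrative assumptions, not measurements or a form fixed by the essay. Under that assumption, \(\alpha\) is minus the slope of an ordinary least-squares fit in log-log space:

```python
import math
from typing import Sequence


def fit_rfh_exponent(bandwidth: Sequence[float],
                     discreteness: Sequence[float]) -> tuple[float, float]:
    """Fit log D = log k - alpha * log B by ordinary least squares; return (alpha, k).

    Assumes a single power law D(B) = k * B**(-alpha), an illustrative choice,
    not a functional form fixed by RFH.
    """
    if len(bandwidth) != len(discreteness) or len(bandwidth) < 2:
        raise ValueError("need at least two paired (B, D) samples")
    xs = [math.log(b) for b in bandwidth]
    ys = [math.log(d) for d in discreteness]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("bandwidth values must not all be equal")
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return -slope, math.exp(my - slope * mx)


# Synthetic data generated from D = 3 * B**-0.5, so the fit should recover it.
B = [1e3, 1e4, 1e5, 1e6]
D = [3.0 * b ** -0.5 for b in B]
alpha, k = fit_rfh_exponent(B, D)
print(f"alpha ~ {alpha:.3f}, k ~ {k:.3f}")  # alpha ~ 0.500, k ~ 3.000
```

Because some regimes show knees or band structure rather than one slope, a single-exponent fit like this is only a first pass; its residuals should be inspected before \(\alpha\) is trusted.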

1. The Mirage of the Digital–Physical Split

We have long spoken of a “digital world” and a “physical world,” as if computation and material reality occupied separate realms. Yet this separation is not written into nature. It is largely an artifact of how we look: a by-product of how we measure, model, and mediate.

1.1 Instruments as Compilers

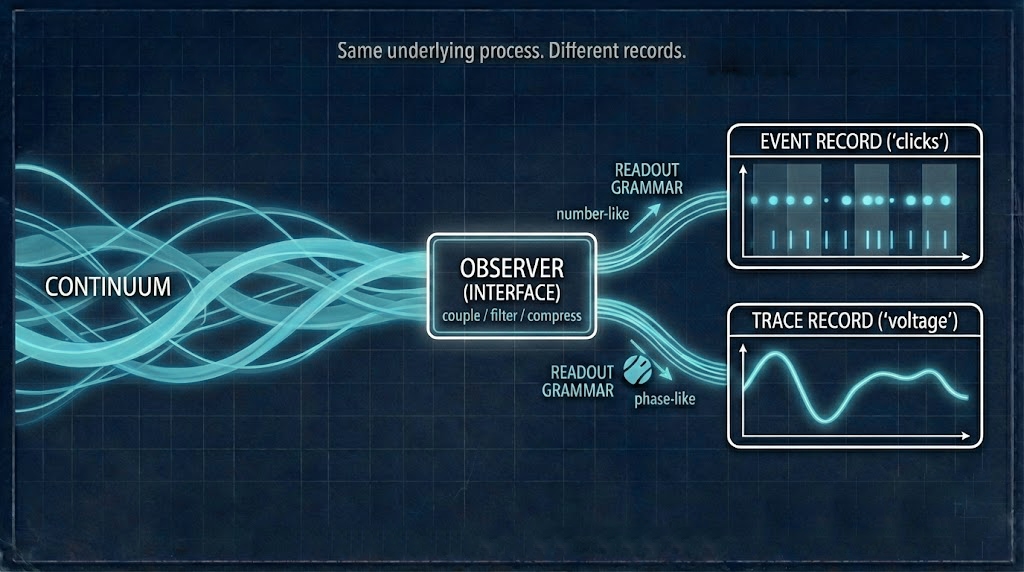

Instruments—whether telescopes, microscopes, or code interpreters—do not reveal independent domains; they compile the same continuous reality into distinct representational languages, producing what appear to be different ontologies: the quantum, the cosmic, the computational.

Think of each instrument as a compiler with limits:

- It exposes only a slice of the continuum.

- It enforces a particular grammar (pixels, counts, bits, fields).

- It trades off resolution, range, and noise in order to produce stable reports.

Finite channel capacity and measurement back-action (the act of measuring disturbs what is measured) shape observed discreteness. Widen the channel, and discreteness softens. Apparent discontinuities across scales often arise from these limits, not from the fabric of reality itself.
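One textbook way to see how a finite channel forces discreteness is the Shannon–Hartley theorem, \(C = W \log_2(1 + S/N)\), for an analog channel of width \(W\) hertz with additive white Gaussian noise (written \(W\) here to avoid a clash with the essay's \(B\), which is information throughput). Rewriting it as \(C = 2W \log_2 \sqrt{1 + S/N}\) gives the familiar rule of thumb that such a channel behaves like \(2W\) samples per second, each resolving about \(\sqrt{1 + S/N}\) distinguishable levels. The sketch below only illustrates that standard relation; it is not a CCT-specific model, and the function names are hypothetical.

```python
import math


def shannon_hartley_capacity(width_hz: float, snr: float) -> float:
    """Capacity in bit/s of an AWGN channel of width_hz with linear signal-to-noise ratio snr."""
    return width_hz * math.log2(1.0 + snr)


def distinguishable_levels(snr: float) -> float:
    """Rule-of-thumb number of amplitude levels resolvable per sample: sqrt(1 + SNR)."""
    return math.sqrt(1.0 + snr)


# Widening the channel (higher SNR) lets each sample resolve finer steps.
for snr_db in (0, 20, 40, 60):
    snr = 10 ** (snr_db / 10)
    print(f"SNR {snr_db:>2} dB: ~{distinguishable_levels(snr):8.1f} levels per sample, "
          f"C = {shannon_hartley_capacity(1e3, snr):8.1f} bit/s for a 1 kHz channel")
```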

A helpful image: the ocean is not “blue” in itself; “blue” is what scattering looks like to our eyes. Names stabilize appearances; processes live beneath them.

1.2 Discreteness as Finite-Bandwidth Projection

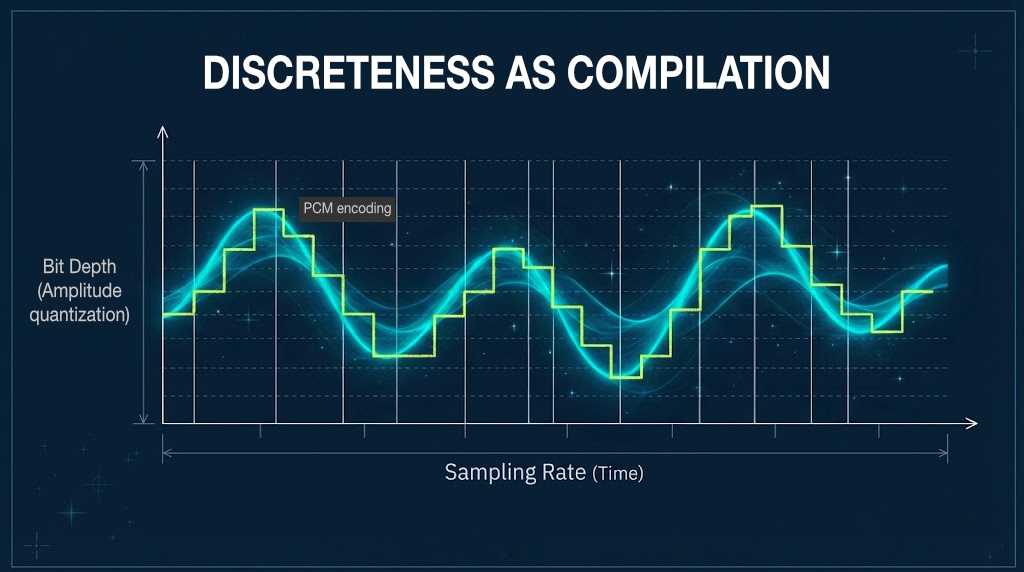

Consider a familiar example: digital audio.

- A microphone rides a continuous pressure wave.

- An analog-to-digital converter samples it at fixed intervals and snaps amplitudes to a finite set of levels.

- As resolution increases, the steps blur into smooth sound, even though the underlying signal never stopped being continuous.

Change the converter and the “world” of the recording changes with it.
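The audio example can be checked numerically. The sketch below samples a continuous sine wave, snaps each sample to one of \(2^n\) uniform levels (a mid-rise quantizer over \([-1, 1]\), an assumption chosen for illustration), and reports the RMS quantization error. For a full-scale signal the error tracks the textbook estimate \(\Delta/\sqrt{12}\), where \(\Delta = 2/2^n\) is the step size, so each extra bit roughly halves it.

```python
import math


def quantize(x: float, bits: int) -> float:
    """Mid-rise uniform quantizer on [-1, 1] with 2**bits levels."""
    levels = 2 ** bits
    step = 2.0 / levels
    # Index of the cell containing x, clamped so x == 1.0 stays in the top cell.
    idx = min(int((x + 1.0) / step), levels - 1)
    return -1.0 + (idx + 0.5) * step


def rms_quantization_error(bits: int, n_samples: int = 10_000) -> float:
    """RMS difference between a sampled unit sine and its quantized version."""
    err2 = 0.0
    for i in range(n_samples):
        # A frequency with a long period (in samples) avoids revisiting the same phases.
        x = math.sin(2 * math.pi * 0.1234567 * i)
        err2 += (x - quantize(x, bits)) ** 2
    return math.sqrt(err2 / n_samples)


for bits in (2, 4, 8, 12):
    step = 2.0 / 2 ** bits
    print(f"{bits:>2} bits: RMS error {rms_quantization_error(bits):.2e}, "
          f"Delta/sqrt(12) = {step / math.sqrt(12):.2e}")
```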

The same pattern holds broadly:

- A telescope widens one slice of bandwidth and reality appears continuous.

- A particle counter constricts another and reality clicks.

- Code interpreters in silicon compile flux (voltages, charges) into legible grammar (bits, tokens).

Swap the compiler, change the grammar; the “world” shifts accordingly.

Discrete outcomes, then, are not nature switching modes but stable outcomes of interaction: moments where a continuous process meets a finite channel and stabilizes into a report. Widen the channel and the pixels soften toward film; narrow it and film hardens into bits and particles. The error was not seeing difference but mistaking it for division: treating our renderings as realms.

1.3 From Mirage to Mechanism

Once the split is recognized as an artifact of compilation, the question shifts from “Which world is real?” to “How do feedback, bandwidth, and the space of possible rules (‘rule-space’) co-produce what appears?”

If instruments translate continuous reality into discrete reports, then computation is not something that happens after the world appears; it is how the world appears at all. The act of observing is already a kind of computation. The "digital" and the "physical" are two dialects of the same recursive act of translation.

This moves us from mirage to mechanism:

- Discreteness is treated as projection.

- Bandwidth and feedback become central physical quantities.

- “Digital vs. physical” becomes a design choice, not a metaphysical boundary.

1.4 Why Now?

Because several pressures are converging: instrument bandwidth is exploding; networked computation externalizes feedback at planetary scale; and across domains (from morphogenesis to markets), the same motifs recur: scaling laws, criticality (systems poised at the edge of instability), fractal structure.

The old split can no longer organize practice. The next paradigm can treat every tool as a translator, and every discipline as feedback design.

Practical stakes. The long‑horizon motivation is to widen humanity's option space and reduce extinction risk by giving us more concrete, testable levers over high‑stakes dynamics. But any new framework will almost certainly deliver its first dividends in new measurement protocols, control strategies, or cross‑domain insights.

1.5 Shift Map

This reframing can be summarized as a set of conceptual transitions:

- From states to transformations.

- From particles to processes.

- From measurement as mirror to measurement as participation.

- From control over external objects to co-tuning with responsive systems in feedback ecologies.

- From reality as discrete by nature to discreteness as compilation: continuity rendered into the finite grammar our instruments can parse.

The rest of the essay unpacks what becomes visible once these shifts are taken seriously.

1.6 What This Isn't

CCT is not pancomputationalism ("everything is a digital computer"), and it is not digital‑physics ("bits are fundamental"). Computation here means continuous, rule‑based evolution, with bits/particles as finite‑bandwidth projections. And while it's adjacent to relational/QBist/decoherence intuitions about measurement, its distinctive move is to treat bandwidth and feedback depth as physical variables that can be swept and falsified.

2. The Continuum: A Rule-Based Dynamical Substrate

If the digital–physical split is an artifact of how we look, what does the world look like when we stop taking that split as fundamental?

2.1 What “Continuum” Means Here

Here, “continuum” does not mean a hidden substance or ether. It means that the underlying dynamics are treated as continuous, while discrete records arise through finite-bandwidth projection and readout.

The continuum is unbroken coherence across relations; discrete reports are what we get when finite instruments sample it.

To call it "continuous" is to say that no event stands alone and every change reverberates through a web of relations. Ontologically, the continuum is the condition that makes interaction possible. Operationally, it appears as a connected state-space with measurable dynamics, within which discrete observations arise as projections.

Put simply: the continuum is what's there (the coherence of reality), and our discrete descriptions are how we access it.

2.2 Computation Beyond the Turing Cage

To see computing as a fundamental mode of being rather than a technological artifact requires releasing it from its Turing cage.

We have the standard view:

- Turing computation: discrete, symbolic, stepwise, defined over strings of symbols and explicit rules.

- Implemented in silicon as clocked, digital state machines.

And the broader view:

- Dynamical computation: continuous, recursive, embodied in any medium that evolves according to rules.

- Implemented in fluids, fields, morphogenic tissues, ecosystems, markets.

In this broader sense, the universe can be understood as computing, not by executing stored programs, but by enacting its own dynamical laws.

Examples of computation without code include field dynamics in fluids, pattern formation in morphogenesis, and resonance cascades in nonlinear systems. In bioelectric morphogenesis, for example, tissue-level voltage patterns act as distributed memories and goals that shape anatomy (computation embodied in living tissue rather than silicon).

What we call “programs” in digital machines are special cases of this broader capacity for rule-based evolution. To compute, then, is simply: To evolve within a structured space of relations.

The “program” of the cosmos is not written anywhere; it is the living field of differential relations that generate structure, form, and novelty. Matter, energy, and code are not separate substances: they are complementary descriptions of the same recursive process.

2.3 The Continuum Computation Thesis (CCT)

From these observations arises a unifying claim of CCT:

Reality can be described as a rule-based dynamical continuum exhibiting scale-invariant programmability, where physical evolution and information processing appear as two projections of the same recursive feedback substrate. In this view, discreteness (bits, particles, measurements) behaves as an epistemic projection of finite channels (see §1.2), not an ontological primitive.

In other words, we model the universe as a physical process that continuously transforms information from one moment to the next, and we treat that transformation like a computation with measurable bandwidth and energy costs.

CCT positions computation not as an analogy to physics, but as its self-description. On this view, it is less that the universe computes, and more that: Computation is what the universe is doing when it exists.

Being and processing become indistinguishable: existence is the universe's ongoing self-transformation. If reality is continuous relation rather than isolated things, the living question becomes: How do those relations retune themselves?

This is the heart of scale-invariant programmability.

3. Information & Feedback: How the Continuum Coheres

Given a continuous, rule-based dynamical substrate, how does coherence arise? Why does anything persist?

3.1 Information as Relational and Constitutive

Here, information is treated not merely as descriptive but as constitutive of stable physical organization: it helps shape what persists, not just how we describe it.

- Every physical transformation is also an informational transformation.

- Changes in configuration correspond to changes in uncertainty and constraint.

Landauer’s principle (erasing one bit of information dissipates at least \(k_B T \ln 2\) of heat, where \(k_B\) is Boltzmann’s constant and \(T\) the temperature of the environment) reveals a deep symmetry between energy and information: you cannot fundamentally “forget” information without paying an energetic price. Thus: Matter and energy are not just ‘carriers’ of information. They can be usefully modeled as information-in-motion, seen under different projections.
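For scale, Landauer's bound can be evaluated directly. The sketch below uses the exact 2019 SI value of the Boltzmann constant and a room temperature of 300 K (the temperature is chosen for illustration). The second line inverts the bound into bits per joule, the same units in which the essay states \(\mathsf{Prog}_T\), although the two quantities measure different things (erasure cost versus steering capacity).

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact in the 2019 SI)


def landauer_limit(temperature_k: float) -> float:
    """Minimum heat (J) dissipated to erase one bit at the given temperature."""
    return K_B * temperature_k * math.log(2)


e_bit = landauer_limit(300.0)
print(f"{e_bit:.3e} J per bit")  # 2.871e-21 J per bit
print(f"{1.0 / e_bit:.3e} bits erased per joule at the bound")
```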

Information is best understood as relational structure: patterns of constraint that stabilize over time.

3.2 Feedback as the Grammar of Being

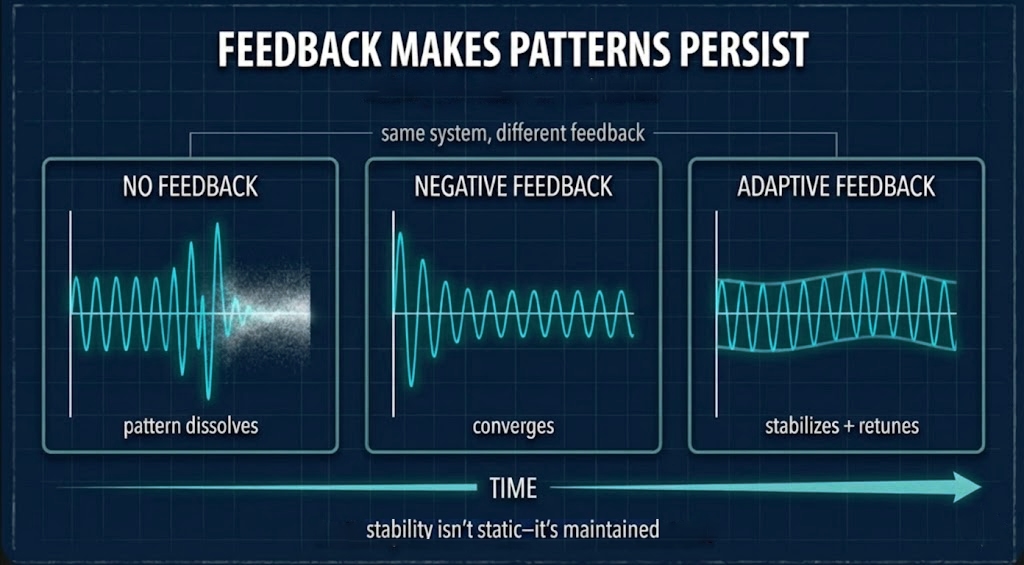

Schematic: arrows trace influence; closed loops indicate feedback that can stabilize patterns into structure.

Feedback is how information becomes structure.

We use “feedback grammar” as a heuristic label for recurrent organizational motifs—damping, amplification, coupling, delay, criticality, and cross-scale constraint propagation—not yet as a standalone formal object.

- A system that takes in signals and retunes its behavior is a feedback system.

- Nested feedback loops across timescales enable self-reference and self-maintenance.
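A toy loop shows how damping, amplification, and delay can all arise from one feedback rule. The sketch assumes a plant that simply accumulates control, \(x_{t+1} = x_t - k\,x_{t-d}\), with proportional gain \(k\) and a feedback delay of \(d\) steps; both the model and the function name `run_loop` are illustrative assumptions. Without delay the loop is damped for any \(0 < k < 2\). With a one-step delay the characteristic equation is \(z^2 - z + k = 0\), whose roots have magnitude \(\sqrt{k}\) once \(k > 1/4\), so the same gain \(k = 1.5\) that damps the undelayed loop amplifies the delayed one.

```python
def run_loop(gain: float, delay: int, steps: int = 200) -> list[float]:
    """Simulate x[t+1] = x[t] - gain * x[t - delay], with x held at 1.0 before t = 0."""
    xs = [1.0] * (delay + 1)  # initial history needed by the delayed term
    for _ in range(steps):
        # xs[-1] is the current state; xs[-1 - delay] is the state `delay` steps ago.
        xs.append(xs[-1] - gain * xs[-1 - delay])
    return xs


for gain, delay in [(0.5, 0), (1.5, 0), (0.5, 1), (1.5, 1)]:
    tail = max(abs(x) for x in run_loop(gain, delay)[-20:])
    print(f"gain={gain}, delay={delay}: max |x| over last 20 steps = {tail:.3g}")
```

The design point is that "damping" and "amplification" are not properties of the gain alone; they depend on how the loop is timed, which is why delay sits alongside them in the feedback vocabulary.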

Across domains (turbulence, neural activity, ecosystems, even black-hole accretion), recurring signatures appear: power-law scaling, self-organized criticality (systems that tune themselves to the edge of instability), and fractal symmetry.

These can be read as the grammar of feedback in action: stable patterns emerging at the edge of instability, where systems are maximally capable of transmitting and transforming information without losing coherence.

Information, then, “behaves as if real” because the operations of matter are informationally coherent. On this view, computing is not merely humanity mimicking nature but a compact description of how nature stabilizes and reuses structure through recursion.

3.3 Energy–Information & Patterns

From a thermodynamic standpoint:

- Energy flows from regions of high potential to low potential.

- Information flow can be seen as the reduction of uncertainty about those flows.

Variational free-energy principles (used in neuroscience and nonequilibrium thermodynamics) express this symmetry: systems that persist tend to minimize expected surprise under constraints; roughly, they act to keep their states within a manageable range, systematically suppressing incoherent configurations.

Schematically: energy flow constrains the paths a system can take, and informational feedback tunes which paths are stable. The coherence of change (patterns that last) is what we call “structure,” “forms,” “laws,” or “agents,” depending on scale.

Why do power laws, criticality, and fractal structure keep showing up?

Because they are:

- Efficient ways of spreading influence across scales.

- Stable outcomes of feedback dynamics.

These phenomena can be read as signatures of how rule-space is organized:

- Rules that couple scales give rise to fractal-like patterns.

- Rules that balance instability and damping generate criticality.

- Rules that preserve invariants across transformations yield scaling laws.

In other words, these “mysterious” empirical regularities can be read as statistics of the continuum’s feedback grammar.
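The criticality point can be illustrated with the simplest cascade model, a Galton–Watson branching process in which each active unit triggers a Poisson-distributed number of successors with mean \(m\) (the branching ratio). This is a standard textbook model chosen here for illustration, not one the essay specifies, and the function names are hypothetical. Below criticality (\(m < 1\)) damping wins and cascades stay small, with mean total size \(1/(1 - m)\). At \(m = 1\) damping and amplification balance, and cascade sizes become heavy-tailed, with \(P(\text{size} \ge s)\) falling off roughly as \(s^{-1/2}\).

```python
import math
import random


def poisson(rng: random.Random, mean: float) -> int:
    """Poisson sample via Knuth's multiplication method (adequate for small means)."""
    threshold, k, p = math.exp(-mean), 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def cascade_size(rng: random.Random, branching_ratio: float, cap: int) -> int:
    """Total number of units activated by one seed unit, truncated at cap."""
    active, total = 1, 1
    while active and total < cap:
        offspring = sum(poisson(rng, branching_ratio) for _ in range(active))
        total += offspring
        active = offspring
    return min(total, cap)


rng = random.Random(0)
for m in (0.5, 0.9, 1.0):
    sizes = [cascade_size(rng, m, cap=2_000) for _ in range(5_000)]
    big = sum(s >= 1_000 for s in sizes) / len(sizes)
    print(f"m={m}: mean size {sum(sizes) / len(sizes):7.1f}, P(size >= 1000) = {big:.4f}")
```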

4. Programmability: Scale-Invariant Steering

With the continuum and feedback in place, we can ask: How much can reality retune itself?

4.1 Programmability: A Working Definition

Programmability is a system’s capacity to tune, modulate, and reconfigure its own generative parameters.

Operationally, we can define it as: reliable steering per unit energy/time; the ability to retune generative parameters, measurable as control efficiency or reduction in predictive error per control budget.

Programmability measures how effectively a system can use its bandwidth and feedback structure to steer itself or be steered.
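The definition of \(\mathsf{Prog}_T\) as control bits per joule can be sketched as a two-line ledger. Two things here are assumptions, not the essay's own protocol. First, "control bits" is read as the reduction in outcome entropy the controller achieves. Second, outcomes are taken to be Gaussian, which makes that reduction \(\log_2(\sigma_{\text{open}}/\sigma_{\text{closed}})\). Reliability across repeated runs is not modelled, and the function names are hypothetical.

```python
import math


def gaussian_control_bits(sigma_open_loop: float, sigma_closed_loop: float) -> float:
    """Entropy reduction, in bits, when control narrows a Gaussian outcome spread.

    A Gaussian's differential entropy is 0.5*log2(2*pi*e*sigma**2), so the
    difference reduces to log2(sigma_open_loop / sigma_closed_loop).
    """
    return math.log2(sigma_open_loop / sigma_closed_loop)


def programmability(control_bits: float, energy_joules: float) -> float:
    """Hypothetical Prog_T estimate: control bits gained per joule spent over horizon T."""
    if energy_joules <= 0:
        raise ValueError("energy budget must be positive")
    return control_bits / energy_joules


# Illustrative numbers: control shrinks the outcome spread from 1.0 mm to 0.25 mm
# while the actuator spends 1 mJ over the horizon T.
bits = gaussian_control_bits(1.0, 0.25)  # log2(4) = 2 bits
print(f"{programmability(bits, 1e-3):.0f} bits per joule")  # 2000 bits per joule
```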

4.2 Why Programmability Appears Scale-Invariant

The key claim is that programmability is scale-invariant in a structural sense.

At every level of organization, systems write and rewrite parts of their own operating conditions:

- Molecular: conformational switching; regulatory networks retuning expression.

- Cellular/tissue: signaling feedback; morphogen fields steering morphogenesis.

- Organism: neural plasticity reshaping behavior; immune systems updating recognition.

- Social/technical: institutions updating norms; machine learning models being continuously updated; protocols being refactored to meet evolving demands.

Across scales, the same shape repeats: feedback modifies the parameters that generate behavior, with constraints acting on constraints.

Same feedback grammar, different substrate; programmability measures steering per resource.

Scale invariance here does not mean that \(\mathsf{Prog}_T\) takes the same value at every scale. It means that across scales, the same class of resource tradeoffs recurs: reliable causal steering remains bounded by bandwidth, energy, noise, and back-action even when substrate and implementation differ.

A useful mental model is a two‑ring picture:

- Inner ring: treat laws as fixed; vary states and controls (standard physics and engineering).

- Outer ring: effective laws/parameters can shift under sufficiently deep feedback.

But the outer ring is not magic; rule changes are themselves resource‑bounded. You can change the game, but you still have to pay for the new board.

4.3 Plateaus of Control Efficiency: A Testable Hint

Empirical hint: Plateaus of control efficiency across resolutions may signal scale-invariant regimes.

Imagine:

- You attempt to control a system at different levels of coarse-graining (e.g., molecular, cellular, organ-level).

- You measure how much control (error reduction, goal attainment) you gain per unit control energy at each resolution.

You might find:

- Regions where control efficiency changes dramatically (indicating stiff or brittle regimes).

- Regions where control efficiency remains relatively stable across scales (indicating programmable bands).

These plateaus would mark scales where the same feedback grammar is effectively in play, even if the microscopic details differ.
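The thought experiment above can be sketched as a simple analysis step: given control efficiency (say, control bits per joule) measured at a sequence of coarse-graining levels, group adjacent levels whose relative change stays below a tolerance as candidate plateaus. The function `find_plateaus`, the 10% tolerance, and the efficiency numbers are hypothetical; a real analysis would also need an uncertainty estimate for each efficiency value.

```python
from typing import Sequence


def find_plateaus(levels: Sequence[str], efficiency: Sequence[float],
                  rel_tol: float = 0.10) -> list[list[str]]:
    """Group consecutive resolutions whose efficiency changes by less than rel_tol."""
    if not levels:
        return []
    plateaus: list[list[str]] = []
    current = [levels[0]]
    for i in range(1, len(levels)):
        prev, cur = efficiency[i - 1], efficiency[i]
        if abs(cur - prev) / max(abs(prev), 1e-12) < rel_tol:
            current.append(levels[i])  # still inside a stable band
        else:
            if len(current) > 1:
                plateaus.append(current)
            current = [levels[i]]  # efficiency jumped: start a new candidate band
    if len(current) > 1:
        plateaus.append(current)
    return plateaus


levels = ["molecular", "cellular", "tissue", "organ", "organism"]
efficiency = [0.2, 1.0, 1.05, 0.98, 3.0]  # illustrative numbers only
print(find_plateaus(levels, efficiency))  # [['cellular', 'tissue', 'organ']]
```

Comparing only neighbours lets a slow trend chain into one long "plateau"; comparing each level against the first value of the current band is a stricter alternative.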

4.4 Grounding Programmability

These programmability principles are not armchair speculation. They are tested through simulations and purpose-built experimental platforms. CCT Labs is developing photonic and material systems to probe how coherence and control efficiency scale under different conditions. Early simulation results show that structured, resonant driving can achieve greater control per unit energy than brute-force approaches (modest gains, but measurable and reproducible). Hardware validation is next. In observer-design studies, the most robust gains are prefactor and knee shifts; exponent changes require explicit resource/correlation changes.

4.5 Programmability as Agency

Within this ontology, agency is not an all-or-nothing property. It is graded by programmability:

- Systems with shallow feedback and low programmability behave like passive media.

- Systems with deep feedback and high programmability can retune their own rule-space.

As feedback deepens, systems grow self-referential: they not only evolve but adjust how they evolve.

Within CCT, programmability names a form of lawful creativity: the resource-bounded retuning of effective rules across scales.

5. How We Test This

CCT is structured for empirical accountability across two phases:

- Phase 1–2 (Calibration): validate CCT metrics (bandwidth, discreteness, programmability) within current physics. Reproduce established results or fail. Control for measurement-mode confounders such as counting versus phase readout, and binning or threshold rules that can shift apparent discreteness without new physics.

- Phase 3+ (Deviation Detection): probe for rule-space transitions where effective constants deviate from standard values. If anomalous bandwidth–discreteness exponents, modified uncertainty products (departures from the standard relation Δx·Δp ≥ ℏ/2), or light-cone deformations survive strict nulls, independent replication, and known-systematic ledgers in the right regimes, they would open a Layer 3 evidence review for the Core Conjecture.

This sequencing prevents epicycles. Phase 1–2 establishes the baseline needed to interpret deviations. The scientific companion details the experimental program. CCT Labs (engineering program) specifies platform-level protocols.

CCT as a controllability criterion. A CCT claim is upgraded when it implies not only that an effect can be observed, but that it can be steered reproducibly under declared constraints: finite actuation response (delay/bandwidth), finite-shot estimation, and regime drift/noise. Those constraints are not "implementation details" to be ignored; they are part of the physical coupling between an observer-controller and the process it probes. This is how CCT turns observer-dependence into an engineering program with stop rules.

Practically: we treat two physical constraints (finite actuation response and drift/noise) and two engineering levers (waveform-shaped control and robust estimation/calibration) as part of the claim, not as after-the-fact excuses.
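A constraint-complete claim can be written down as a small ledger. The sketch below assumes, for illustration only, a first-order actuator (amplitude response \(1/\sqrt{1 + (f/f_c)^2}\) at drive frequency \(f\) and cutoff \(f_c\)), a binary readout whose probability is estimated from \(N\) shots, drift modelled crudely as extra additive noise on that estimate, and a \(z = 3\) threshold for calling a steered shift reproducible. All names and the detection rule are hypothetical.

```python
import math


def actuator_gain(drive_hz: float, cutoff_hz: float) -> float:
    """Amplitude response of a first-order (single-pole) actuator."""
    return 1.0 / math.sqrt(1.0 + (drive_hz / cutoff_hz) ** 2)


def steering_detectable(commanded_shift: float, baseline_p: float, shots: int,
                        drive_hz: float, cutoff_hz: float,
                        drift_sd: float = 0.0, z: float = 3.0) -> bool:
    """Does the realized shift in outcome probability clear shot noise plus drift?"""
    # Finite actuation response: only part of the commanded shift is realized.
    realized = commanded_shift * actuator_gain(drive_hz, cutoff_hz)
    # Finite-shot estimation: binomial standard error of one probability estimate.
    shot_sd = math.sqrt(baseline_p * (1.0 - baseline_p) / shots)
    # Difference of two independent runs (steered vs. unsteered), equal noise assumed.
    diff_sd = math.sqrt(2.0) * math.hypot(shot_sd, drift_sd)
    return realized > z * diff_sd


print(steering_detectable(0.05, 0.5, 10_000, drive_hz=100, cutoff_hz=100))    # True
print(steering_detectable(0.05, 0.5, 10_000, drive_hz=1_000, cutoff_hz=100))  # False
print(steering_detectable(0.05, 0.5, 1_000, drive_hz=100, cutoff_hz=100))     # False
```

The same commanded effect passes or fails depending on actuation bandwidth and shot budget, which is the sense in which those numbers belong inside the claim rather than in a footnote.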

The claims developed here, that programmability is scale-invariant, that discreteness is projection, and that laws are adaptive feedback habits, belong to Layer 3. Treat them as working hypotheses motivated by Layers 1 and 2, not as derived consequences. Known physics becomes a stability target: CCT must explain why the familiar effective descriptions are so hard to dislodge before it can responsibly claim that any boundary has been crossed.

Early probes across several regimes suggest RFH-style bandwidth–discreteness scaling, with exponents that vary by system. The preprint reports the measurements and controls.

6. Determinism and Novelty: Law in a Living Rule-Space

If reality computes through recursive feedback, the question is not whether it is lawful, but how law remains open to novelty.

6.1 Deterministic Operation, Evolving Rule-Space

Within a given rule-space, evolution proceeds lawfully: feedback relations unfold according to consistent dynamics. Yet the rule-space itself can evolve as boundaries, symmetries, and effective laws are retuned by higher-level feedback.

Thus the universe can be understood as deterministically emergent (deterministic in operation, creative in unfolding). Lawfulness and novelty coexist: each feedback cycle stabilizes one configuration, and that configuration alters the conditions for the next. Rules write states; states write rules.

6.2 Two Readings

Two philosophical interpretations coexist. The first embraces full determinism with effective unpredictability: micro-dynamics are fully deterministic, but finite bandwidth, chaos, and coarse-graining make long-term prediction effectively impossible. In this view, observed novelty is "apparent": a product of our epistemic limits, but not baked into reality itself. The second accepts fundamentally stochastic micro-dynamics with a deterministic law-of-laws: micro-events involve genuine randomness, yet the evolution of rule-space itself (the space of constraints and symmetries) is nonetheless structured and law-like. Here, randomness serves as substrate while order emerges as invariant.
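The first reading can be illustrated with the logistic map \(x \mapsto r\,x(1 - x)\) at \(r = 4\), a textbook deterministic system with chaotic dynamics (the choice of map and parameters is ours, for illustration). Two starting points that a finite-resolution observer could not tell apart, differing by \(10^{-10}\), track each other briefly and then decorrelate completely: prediction fails not because the rule is random but because resolution is finite.

```python
def logistic_orbit(x0: float, r: float = 4.0, steps: int = 80) -> list[float]:
    """Iterate the deterministic logistic map x -> r * x * (1 - x)."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


a = logistic_orbit(0.2)
b = logistic_orbit(0.2 + 1e-10)  # indistinguishable to an observer with 1e-9 resolution
for t in (0, 10, 20, 30, 40, 50):
    print(f"t={t:2d}: |difference| = {abs(a[t] - b[t]):.2e}")
```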

The default stance in this essay threads between them: Deterministic within a living rule-space that can itself evolve; novelty arises as feedback retunes the rules that govern evolution. This allows us to preserve lawfulness without freezing the laws themselves into immutable axioms.

6.3 Rule-Space Change Is Not Arbitrary

What counts as a change in rule-space? It can manifest as shifts in which variables are effectively coupled, changes in relevant timescales (what registers as "fast" versus "slow"), the emergence of new invariants or conserved quantities, or the reorganization of symmetry groups through spontaneous symmetry breaking (when a symmetric system settles into an asymmetric state).

Consider renormalization flows in quantum field theory: as you zoom in or out (change energy scale), the effective strength of interactions changes; yet this change itself follows a structured pattern (the "beta function" describes the rate of change). The couplings aren't fixed, but neither are they arbitrary; they flow along predictable trajectories. In biological evolution, the fitness landscape changes as organisms reshape their environment, not merely by adapting to a fixed backdrop but by creating new niches and selection pressures.
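For a concrete instance of such a structured flow, consider the textbook one-loop running of the QED fine-structure constant \(\alpha\) with a single charged fermion (added here as a standard illustration, not a result of this essay). The beta function fixes how the coupling changes with the renormalization scale \(\mu\), and integrating it yields a definite trajectory rather than an arbitrary one:

\[
\beta(\alpha) \equiv \frac{d\alpha}{d\ln\mu} = \frac{2\alpha^{2}}{3\pi}
\quad\Longrightarrow\quad
\frac{1}{\alpha(\mu)} = \frac{1}{\alpha(\mu_0)} - \frac{2}{3\pi}\,\ln\frac{\mu}{\mu_0}.
\]

The coupling grows slowly with energy, and knowing \(\alpha\) at one scale \(\mu_0\) determines it at every other scale where the one-loop approximation holds.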

In each case, rule-space evolution is constrained and structured, not whimsical. Novelty is generated under pressure from the existing feedback ecology, not conjured from nothing.

6.4 Creativity Without Breaking Laws

This dual stance allows:

- Laws that are stable enough to be discoverable and usable.

- Rule-space dynamics that are flexible enough to generate new forms.

Creativity does not require breaking laws. It requires:

- Laws acting on laws.

- Constraints that can be reconfigured while preserving overall coherence.

No agent can steer reality faster than they can resolve it. (We prove a version of this in our formal "baby theorems"; see the Scientific Companion.)

Programmability is the name CCT gives to this possibility: nature changing its mind without breaking its laws.

7. Observation as Participation

No observer stands outside this process. Every act of measurement is a form of participation: a channel’s way of compiling continuous change into something it can read. To observe is to enter the feedback loop, not to stand apart from it.

7.1 Measurement as Compilation

Call the limit bandwidth:

How much task-relevant change a channel can carry per unit of time and energy.

A detector is not a neutral window; it is:

- A selection mechanism for which aspects of the continuum will be rendered as discrete outcomes.

- A control interface that perturbs the system it measures.

Interference speaks the continuum; detection speaks our limits.

7.2 Collapse as Compilation

Think of the double-slit experiment:

- Before detection, you have a continuous interference field, with amplitudes distributed over possible paths.

- At detection, a finite channel (detector + readout + recording apparatus) compiles a click.

Here, collapse is interpreted as compilation: a finite measurement chain projects continuous state evolution into a discrete, architecture-dependent record.

This is a claim about the interface:

- The wave-like dynamics are continuous evolutions in rule-space.

- Particle-like clicks are treated here as stable records produced by the interaction between continuous dynamics and a bandwidth-limited compiler.

Prediction (sketch):

As \(B\) increases and the readout shifts from number-like to quadrature-like, the record should interpolate from click-like events toward continuous trajectories, without implying energy quantization vanishes. Testable through bandwidth-expansion experiments, where you increase dynamic range, integration time, or detection dimensionality and track how sharp "clicks" become extended patterns. In some regimes this softening appears as smooth power-law scaling of discreteness with bandwidth; in others (for example horizon analogs and resonant cavities) it manifests as band structure and transitions rather than a single slope. Both are read, in this ontology, as different compiler grammars through which finite channels project the same underlying continuum. The main discriminator is curve-shape change (knees/band transitions), not a universal slope requirement.
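Because the discriminator is curve shape rather than one universal slope, a natural analysis step is to ask whether a log-log discreteness-versus-bandwidth curve is better described by two line segments than by one. The sketch below does a brute-force two-segment least-squares fit and returns the breakpoint (the candidate knee) with the lowest total squared error. The function names, the minimum of three points per segment, and the synthetic curve are assumptions for illustration; a real test would also need a model-comparison penalty before declaring a knee, plus the measurement-mode controls listed for Phase 1–2.

```python
import math
from typing import Sequence


def _line_sse(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Sum of squared residuals of an ordinary least-squares line through (xs, ys)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx if sxx else 0.0
    return sum((y - my - slope * (x - mx)) ** 2 for x, y in zip(xs, ys))


def find_knee(bandwidth: Sequence[float], discreteness: Sequence[float],
              min_points: int = 3) -> tuple[float, float]:
    """Best two-segment fit in log-log space: (first B of the second segment, total SSE)."""
    xs = [math.log10(b) for b in bandwidth]
    ys = [math.log10(d) for d in discreteness]
    best = (float("nan"), float("inf"))
    for k in range(min_points, len(xs) - min_points + 1):
        sse = _line_sse(xs[:k], ys[:k]) + _line_sse(xs[k:], ys[k:])
        if sse < best[1]:
            best = (bandwidth[k], sse)
    return best


# Synthetic curve: discreteness flat up to B = 1e4, then falling as 1/B.
B = [10 ** (i / 2) for i in range(2, 13)]  # 1e1 ... 1e6 in half-decade steps
D = [1.0 if b <= 1e4 else 1e4 / b for b in B]
knee, sse = find_knee(B, D)
print(f"knee near B = {knee:.3g} (SSE {sse:.1e})")
```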

7.3 Comparison to Interpretations of Quantum Theory

This stance rhymes with QBism (which treats quantum states as encoding an agent's expectations about future experiences), relational QM (which treats properties as relative to observers), and decoherence (environment-induced emergence of classical behavior), but differs in what it treats as primary. Here the focus is on compiler bandwidth and control granularity as physically salient quantities, and collapse/measurement is treated as a specific kind of projection operation enacted by finite channels, not a fundamental axiom. The move preserves the successful measurement formalism while shifting the ontology toward continuous rule-based dynamics, with discreteness local to interactions.

7.4 Participatory Knowledge

Knowledge, in this frame, is not a mirror of the world but a phase of its recursion.

- To measure is to reshape rule-space (by conditioning, by control, by creating new stable couplings).

- To model is to create new feedback paths (simulation, prediction, intervention).

Observer bandwidth becomes part of the phenomenon, not external to it. Scientific practice becomes:

The design of compilers that expose and steer specific aspects of the continuum’s feedback grammar.

8. Time and Constants: Emergent Coherences

Two familiar pieces of physics (time and constants) look different in this light.

8.1 Time as Recursion

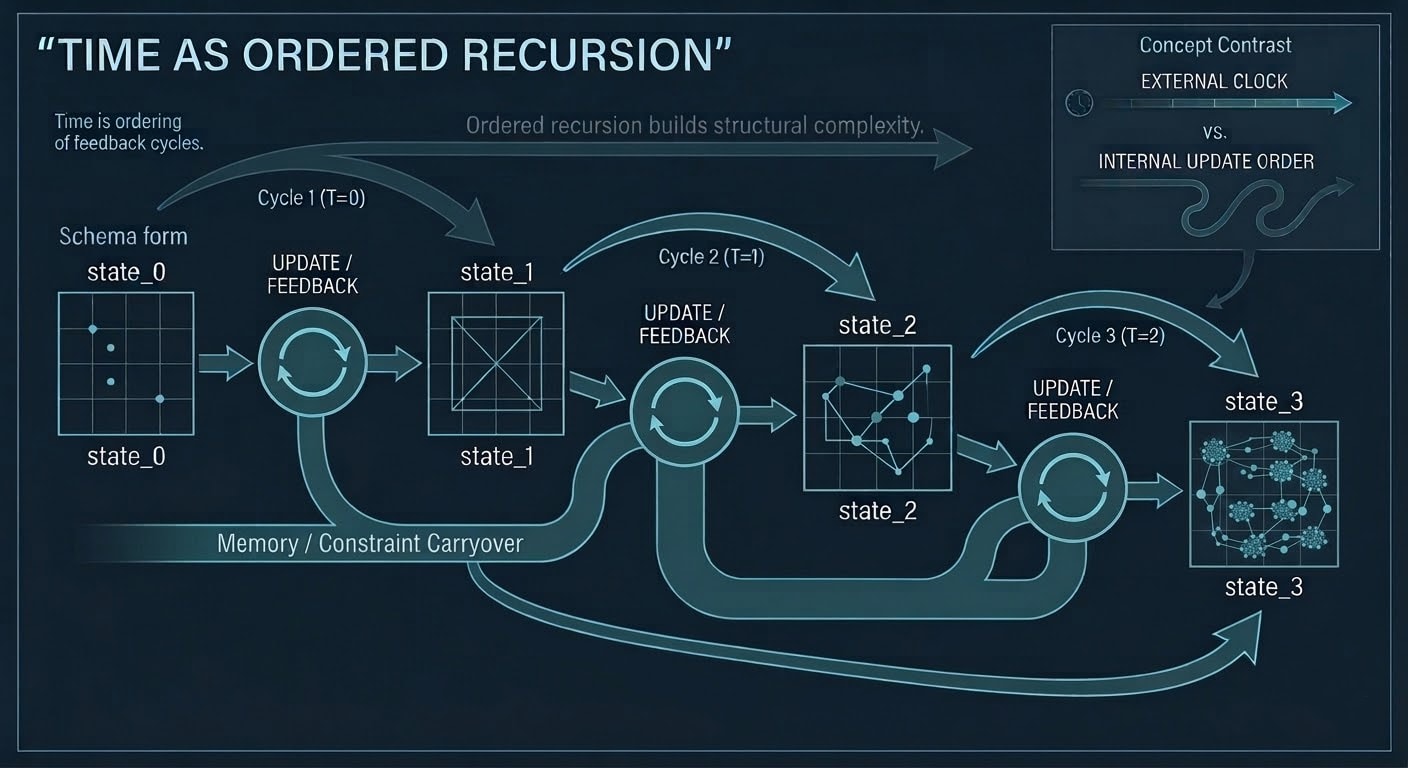

In this ontology, time is not an external coordinate against which events unfold. It is:

The ordering of feedback cycles: the rhythm through which the continuum stabilizes novelty into record.

Schematic (not a literal timeline): time as the ordering of feedback cycles.

Each recursive loop constitutes a unit of temporal articulation: the way transformation measures itself.

- The arrow of time arises from asymmetry in informational flow: each update integrates the past into a predictive structure while the future remains open as potential feedback.

- Time is the direction of computation: the universe’s own iterative syntax of becoming.

From the continuum’s vantage, time is recursion perceived locally. What we call “the present” is coherence maintained across loops.

The present is continuity experienced as now.

8.2 Constants as Self-Tunings

The constants of nature ($ c, \hbar, G $) are less immutable decrees than:

Self-tuned equilibria: settings that preserve coherence across scales.

The Core Conjecture interprets them as:

- ℏ: a back‑action coupling (a regime parameter). ℏ sets the scale of quantization/error that appears when a finite‑bandwidth observer compiles continuous dynamics into discrete records. This is the “outer ring” possibility: if the coupling is ever retunable, it should show up as reproducible anomalies (modified uncertainty products or shifted bandwidth–discreteness curves) that survive measurement‑mode controls (Phase 3+).

- c: understood as a bandwidth ceiling. Here it marks the maximum rate at which coherent information propagates through a local rule-space configuration. Light‑cone structure can then be treated as the geometry of that ceiling, rather than only as an externally given axiom.

- G: understood as a coupling between geometry and energy–information flow. Here spacetime curvature can be modeled as a downstream consequence of how rule-space organizes coherence under energy constraints, rather than only as an independent primitive.

Technical note (formal layer): In the Model‑Theorem layer (Baby Theorem 8), ℏ appears as an effective back‑action coupling for finite‑bandwidth observers; in that regime the uncertainty product can be read as a quantization error with characteristic exponent $ \alpha \approx 0.5 $. The Scientific Companion spells out assumptions and controls.

Renormalization in quantum field theory already hints at this: couplings “run” with scale yet flow toward fixed points. CCT takes that hint seriously and reads "constants" as attractors in rule-space: values the feedback ecology returns to because they stabilize coherence and communicability across regimes.
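A standard textbook case shows what "running toward a fixed point" looks like in practice. The sketch below is not a CCT result: it is the exact decimation rule for the zero-field one-dimensional Ising chain, where summing out every other spin maps the dimensionless coupling \(K\) to \(K'\) with \(\tanh K' = \tanh^2 K\). Any finite starting coupling flows to the attracting fixed point \(K = 0\). The function names are ours, chosen for illustration.

```python
import math

def decimate(k: float) -> float:
    """One real-space RG step for the zero-field 1D Ising chain.

    Summing out every other spin gives tanh(K') = tanh(K)**2.
    """
    return math.atanh(math.tanh(k) ** 2)

def run_coupling(k0: float, steps: int) -> list[float]:
    """Return the coupling after each coarse-graining step, starting from k0."""
    trajectory = [k0]
    for _ in range(steps):
        trajectory.append(decimate(trajectory[-1]))
    return trajectory

# Different starting couplings all "run" toward the same attractor, K = 0.
for k0 in (0.5, 1.0, 2.0):
    print(k0, [round(k, 4) for k in run_coupling(k0, steps=8)])
```

Strong starting couplings (near the repelling fixed point at \(K \to \infty\)) change slowly at first, then collapse toward zero; this is the sense in which a coupling can run with scale while the flow itself is organized by fixed points.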

That claim sits squarely in Layer 3 (Meta‑Law Conjecture): a claim about how reality may organize its own coherence, not about what current measurements already force us to accept. The validation program (§5) is designed to probe for deviations: signatures of rule-space transitions where these "constants" cease to behave as fixed. Absence of such signatures after sustained probing would constrain the conjecture; their presence would begin to support it.

If drift or regime-dependence were ever observed in these parameters, it would register as:

- Context-dependent effective parameters, not broken law.

- Adaptability expressed as stable retuning within a deeper rule-space ecology.

Crucially, the programmability principles developed here (bandwidth-limited control, feedback coherence, scale-invariant steering) apply whether or not constants prove regime-dependent. Phase 1-2 validation (materials, effective metrics) is meant to deliver practical control capabilities regardless of whether Phase 3 reveals deviations in fundamental constants.

8.3 Stability as Learned Coherence

Here, physics shifts its emphasis from seeking fundamental particles to exploring:

- Rule-symmetries and

- Adaptive feedback laws.

Constants, methods, and models all become:

Stability strategies within a single feedback ecology:

physics as one branch of a larger adaptive monism.

9. Toward an Adaptive Monism

What emerges is a post-digital monism: an adaptive view on which there appears to be no fundamental break between:

- Silicon and star,

- Algorithm and atom.

Both seem to instantiate the same self-similar principles of transformation and feedback. The digital can be understood as:

The physical rendered legible in our chosen syntax.

This monism is dynamic, not static:

- It evolves as the universe continues to refine its own syntax.

When we code, simulate, or network, we do not create an artificial world beside the real one. We:

- Participate in the real world’s own act of computation.

- Contribute additional layers of feedback and programmability to the continuum.

Here, programmability is not used merely as a metaphor for nature’s creativity; it is a proposed name for how that creativity becomes operationally legible:

Nature’s creativity made explicit.

10. Creed

Continuity first; discreteness is how limits appear.

Feedback is the grammar of being.

Programmability is nature’s way of changing its mind without breaking its laws.

Time is recursion felt locally.

Stability is coherence that learned to last.

Truth is what survives revision.

11. The Compiler Cosmos

The universe can be understood as computing itself not in bits but in continuous flow: the unbroken recursion of relation becoming form. Our bits are local encodings of that flow, constrained by the syntax of our tools; the digital and the physical are descriptive conveniences, not separate realms.

Each gesture of code, each transfer of energy, each branching of a galaxy instantiates the same invariant operation:

- Information in motion,

- Rules in feedback,

- Reality programming itself.

In that light, physics and computation no longer stand apart but become complementary languages through which the universe becomes intelligible to itself.

The universe programs itself.

CCT Philosophical Essay - Lay Guide

[This document develops the ontological story. The formal operators, estimators, and experiments can be found in the preprint.]

0. Orientation

Physics is very good at describing patterns: equations that keep working. CCT starts one level earlier: what can a finite observer reliably measure and control?

Every real observer is finite. Sensors have bandwidth limits. Controllers have latency. Experiments have noise floors.

So we ask a more operational question than "what are the laws?": What stays the same when you change how you measure it?

We call this program the Continuum Computation Thesis (CCT): the idea that reality may behave like a continuous, rule-based process.

"Computation" here does not mean "bits at the bottom." It means that the world transforms states into states in a structured, lawful way.

In CCT, discreteness is treated as what you see when you measure something continuous with limited bandwidth, not an assumption about what reality is made of.

Three Layers (and Why This Isn't One Big Claim)

CCT is intentionally stratified into layers so the speculative part cannot hide inside the practical part:

- Layer 1 - Model theorems (rigorous in toy worlds). In explicit model classes (finite-state, bandwidth-limited observers/controllers), you can prove tradeoffs.

- Layer 2 - Engineering regime (testable in lab systems). The same tradeoffs should show up in real instruments and controllable media. This is where CCT Labs lives: if predictions fail here, CCT fails in that regime.

- Layer 3 - Meta-law conjecture (speculative, gated). The bold possibility: what we call "laws" are stable habits (attractors) in a larger space of possible rules. This is only pursued if Layers 1-2 validate the methodology.

Key Terms (Quick, Non-Technical)

- Instrument as compiler: a measurement tool that turns smooth changes into readable outputs (counts, pixels, clicks, continuous signals).

- Bandwidth (B): how much task-relevant information your observer can pull per unit time (a throughput limit).

- Rule-space (R): a conceptual "landscape of possible effective laws." Stable regions look like familiar physics.

- Feedback grammar: a small set of recurring feedback moves (coupling, delay, amplification, damping) that shape stability and structure.

- Programmability: how much you can steer a system's behavior per unit resource.

- Retunability: the possibility that effective parameters can shift under deep feedback while remaining coherent.

1. The Mirage of the Digital-Physical Split

We often talk as if there is a "digital world" (bits, code) and a "physical world" (matter, fields). CCT treats that split as mostly an artifact of how we measure.

1.1 Instruments as Compilers

An instrument is not a window onto "the thing itself." It is a translation device with constraints.

- A camera turns continuous electromagnetic fields into pixels and counts.

- A particle detector turns continuous fields into discrete "clicks."

- A CPU turns analog voltages into stable bit patterns by enforcing thresholds and timing.

In CCT language, instruments compile the same continuous reality into different report formats. Change the compiler (bandwidth, timing, estimator, feedback), and the "world" you report can change.

1.2 Discreteness as Finite-Bandwidth Projection

If you only take one idea from the philosophical essay, take this:

Measurement limits can make a continuous process look discrete.

This is not mystical; it's ordinary engineering. A low-bandwidth recording makes speech sound blocky and quantized. A higher-bandwidth recording recovers smoothness.

The scene did not change. The channel changed.

Example: A camera's readout is discrete photon counts. A gravitational-wave detector's readout is continuous strain. Different measurement chains, different apparent discreteness.
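The speech-recording example can be made numerical. This is a minimal sketch under assumptions of our own: a uniform quantizer stands in for the finite channel, bits per sample stand in for bandwidth (at a fixed sample rate, more bits per sample means more throughput), and the worst-case gap between record and signal stands in for discreteness. The sine wave, the "scene", is identical in every run; only the channel changes.

```python
import math

def quantize(x: float, bits: int) -> float:
    """Map x in [-1, 1] to the midpoint of one of 2**bits equal cells."""
    levels = 2 ** bits
    step = 2.0 / levels
    # Cell index for x; clamp so x == 1.0 lands in the top cell.
    index = min(int((x + 1.0) / step), levels - 1)
    return -1.0 + (index + 0.5) * step

def max_record_error(bits: int, samples: int = 1000) -> float:
    """Largest gap between one period of a sine wave and its quantized record."""
    worst = 0.0
    for n in range(samples):
        signal = math.sin(2 * math.pi * n / samples)  # the unchanged scene
        worst = max(worst, abs(signal - quantize(signal, bits)))
    return worst

# Same scene, wider channel: the record's graininess shrinks.
for bits in (1, 2, 4, 8):
    print(bits, round(max_record_error(bits), 5))
```

Each extra bit halves the cell size, so the worst-case error is bounded by \(2^{-\text{bits}}\).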

1.3 Shift Map (What Changes if You Take This Seriously)

If discreteness can be a projection, then a few conceptual habits shift:

- From states to transformations (what changes, not just what "is").

- From measurement as mirror to measurement as participation (your tools shape what you can reliably see).

- From control over a passive object to co-tuning with a responsive system (feedback matters).

- From "laws are fixed axioms" to "laws are the stable regularities that survive finite observation and control."

1.4 What This Isn't

CCT is often misread as one of these; it isn't:

- Not digital physics. We do not claim bits are fundamental. We treat bits/clicks as finite-measurement outputs.

- Not pancomputationalism (as a blanket slogan). "Everything computes" is too vague. CCT is about measurable constraints.

- Not a replacement for GR/QM/SM. Existing physics remains valid as effective theory and is extraordinarily accurate in its regimes.

2. Rule-Space: Where "Laws" Live (As a Useful Picture)

Rule-space is a way of making a subtle idea concrete:

- Imagine every possible set of effective rules (ways the world could update) as points in a huge abstract space.

- Many points are unstable nonsense.

- Some points are stable: once you're near them, dynamics and feedback keep you near them.

Those stable regions are what we experience as "laws."

This is a conjectural picture, but it leads to a practical posture: when we build instruments and controllers, we are not only observing states; we are probing which regularities remain stable under different bandwidth and feedback conditions.

2.1 Rule-Space as Landscape (Gated Analogy)

Think of rule-space like a landscape of hills and valleys:

- Each point on the landscape is a different set of "rules" for how things work.

- Most points are unstable nonsense - like balancing on a knife-edge hill.

- But some points are valleys: stable places where, once you're there, you tend to stay there.

Those valleys are what we experience as "the laws of physics."

Here's the key insight: current laws feel permanent because we're in a stable valley. If retunability exists (Phase 3 speculation), deep feedback might move you between valleys under constraints - but this is not established; it's a testable possibility.

Example: Imagine a ball rolling on a rubber sheet. The valleys are where it naturally settles. If you could somehow stretch or tilt the sheet (extreme feedback), new valleys might form and old ones might shift. The ball hasn't broken the law of "roll downhill" - but which hill counts as "down" has changed.

This is a picture, not a proof. But it makes the idea concrete: Effective laws may be stable configurations in a larger space of possibilities, not axioms.
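The rubber-sheet picture can be made concrete with a toy double-well landscape. This sketch rests on choices of our own: a quartic potential, an overdamped "roll downhill" update, and a hypothetical `tilt` parameter standing in for extreme feedback. It is not CCT's formal rule-space. The update rule is fixed throughout; only the landscape changes.

```python
def settle(x: float, tilt: float, dt: float = 0.01, steps: int = 20_000) -> float:
    """Let an overdamped ball roll on V(x) = x**4/4 - x**2/2 - tilt*x.

    The rule never changes (move against the slope dV/dx); only the
    landscape does, through the hypothetical `tilt` parameter.
    """
    for _ in range(steps):
        slope = x**3 - x - tilt  # dV/dx
        x -= dt * slope          # roll downhill
    return x

start = settle(-1.0, tilt=0.0)      # sits in the left valley at x = -1
gentle = settle(start, tilt=0.2)    # left valley shifts but survives
strong = settle(start, tilt=0.5)    # left valley disappears; ball crosses over
relaxed = settle(strong, tilt=0.0)  # tilt removed; ball stays in the right valley
print(round(start, 3), round(gentle, 3), round(strong, 3), round(relaxed, 3))
```

A small tilt only shifts the occupied valley; a tilt above about 0.385 (\(2/(3\sqrt{3})\) for this potential) erases it, and the ball stays in the new valley after the tilt is removed. In the analogy, that is a bounded intervention producing a new, stable regime without any change to the rule "roll downhill".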

3. The Continuum (What "Continuous" Means Here)

When CCT says "continuum," it does not mean a hidden material ether. It means: reality is treated as an ongoing, connected process of relations and feedback, and discrete reports are what finite observers extract from that process.

Put simply:

- The world can evolve continuously.

- Observers record it in discrete symbols because observers have finite channels.

3.1 Computation Beyond the Turing Cage

Most people hear "computation" and think "digital computers." CCT uses a broader (but still concrete) meaning:

- Turing-style computation: stepwise symbols, clocked updates, strings of bits.

- Dynamical computation: continuous state evolution that implements rule-based transformation in a physical medium.

In this broader sense, fluids, fields, tissues, and networks all "compute" in the same way a storm "computes": they evolve under constraints, and those constraints can be measured and sometimes steered.
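The two senses of computation can be set side by side on one task. In this sketch (our illustration, not a CCT construction), both functions produce \(\sqrt{a}\): the first by clocked symbolic updates (Newton's method), the second by letting a state obey the flow \(dx/dt = a - x^2\), which settles at \(\sqrt{a}\) for any positive start. The small-step Euler loop is only a digital stand-in for what a physical medium would do continuously.

```python
def newton_sqrt(a: float, iterations: int = 30) -> float:
    """Turing-style: discrete, clocked updates of a symbol (Newton's method)."""
    x = max(a, 1.0)  # start at or above sqrt(a)
    for _ in range(iterations):
        x = 0.5 * (x + a / x)
    return x

def flow_sqrt(a: float, x0: float = 1.0, dt: float = 1e-3, t_end: float = 40.0) -> float:
    """Dynamical-style: the state follows dx/dt = a - x**2 and settles at sqrt(a).

    The Euler loop approximates a continuous flow; the "answer" is simply
    where the dynamics come to rest.
    """
    x = x0
    for _ in range(int(t_end / dt)):
        x += dt * (a - x * x)
    return x

print(newton_sqrt(2.0), flow_sqrt(2.0))
```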

4. Information and Feedback: How the Continuum Coheres

Why does anything persist? Why doesn't a world of continuous flux wash everything out into noise?

CCT's answer centers two ideas:

- Information is relational. It's about how things connect and constrain each other, not just labels we put on them.

- Feedback stabilizes. Loops can maintain structure across time.

Engineers already know the basic "verbs" of feedback: amplify, damp, delay, couple, lock phase. The philosophical bet is that the same pattern of feedback moves repeats across domains and scales.

This is why CCT keeps asking an engineering question even in philosophy: how much steering per joule does this buy, and what bandwidth does it assume?
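Two of those verbs, damping and delay, interact in a way a short sketch can show. Under assumptions of our own (a scalar error, a proportional correction, and gain and delay values chosen for illustration), the same gain that damps an error when it acts promptly amplifies it into a growing oscillation when it acts on stale readings. For this classical delayed-feedback equation, stability requires a gain below \(2\cos\big(d\pi/(2d+1)\big)\), about 0.35 for a delay of \(d = 4\) steps.

```python
def run_loop(gain: float, delay: int, steps: int = 200) -> list[float]:
    """Simulate x[t+1] = x[t] - gain * x[t - delay], with x = 1 before t = 0."""
    history = [1.0] * (delay + 1)
    for _ in range(steps):
        stale_reading = history[-1 - delay]  # what the controller acts on
        history.append(history[-1] - gain * stale_reading)
    return history

prompt = run_loop(gain=0.5, delay=0)  # damping: error halves every step
late = run_loop(gain=0.5, delay=4)    # same gain, stale data: oscillation grows
print(abs(prompt[-1]), max(abs(v) for v in late[-20:]))
```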

5. Programmability and Retunability (Scale-Invariant Steering)

Programmability is a working name for something engineers recognize immediately: some systems give you a lot of reliable steering for a little effort, and others don't.

5.1 What Is Programmability?

In CCT terms, programmability is steering capacity per resource.

More precisely: how much control do you get for the energy and time you put in? How efficiently can you nudge a system from one state to another, or retune how it behaves?

This isn't vague. It's measurable.

You can score it as:

- Error reduction per control joule (in a lab system)

- Bits of reliable steering per watt-hour (in a computational system)

- Cell migration distance per unit metabolic energy (in a biological system)

The numbers will differ, but the shape of the tradeoff - between control, energy, bandwidth, and coherence - repeats.
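A minimal sketch of the first score, error reduction per control joule, under assumptions of our own: the plant is an integrator (\(de/dt = u\)), the controller is proportional (\(u = -k\,e\)), and energy is the proxy \(\int u^2\,dt\) (as for a drive into a unit resistance). None of these choices come from the CCT preprint; they just make the tradeoff visible.

```python
def steer(gain: float, horizon: float = 2.0, dt: float = 1e-4) -> tuple[float, float]:
    """Proportional control of an integrator plant, de/dt = u with u = -gain * e.

    Returns (error reduction achieved by the horizon, control energy spent),
    where energy is the assumed proxy: integral of u**2 dt.
    """
    error, energy = 1.0, 0.0
    for _ in range(int(round(horizon / dt))):
        u = -gain * error
        energy += u * u * dt
        error += u * dt
    return 1.0 - error, energy

for gain in (0.5, 2.0, 8.0):
    reduction, joules = steer(gain)
    print(f"gain={gain}: reduction={reduction:.3f}, per joule={reduction / joules:.3f}")
```

Gentle control buys more error reduction per joule but leaves more error at the horizon; aggressive control does the reverse. For this toy plant the score works out to \(2/\big(k(1 + e^{-kT})\big)\) for gain \(k\) and horizon \(T\).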

5.2 Why Programmability Appears at Every Scale

The claim is not that the numbers are identical across scales. It's that the same kinds of constraints show up everywhere:

- Molecular: conformational switches in proteins; gene regulatory networks retuning expression.

- Cellular/tissue: signaling cascades; morphogen gradients steering development.

- Organism: neural plasticity reshaping behavior; immune systems updating their recognition patterns.

- Social/technical: institutions updating norms; algorithms being retrained; protocols refactored.

At every level, systems write and rewrite parts of their own operating conditions using feedback.

Scale invariance here means: The same basic tradeoffs - between control, flexibility, and staying organized - show up at every scale.

This suggests adaptive rule-spaces rather than frozen laws.

5.3 Retunability: The Outer Ring (Speculative)

Here's where it gets speculative.

Think of it as a two-ring picture:

- Inner ring: Laws are fixed; you vary states and controls. (Standard physics and engineering.)

- Outer ring (Phase 3 speculation): Under deep enough feedback, effective laws/parameters might shift while preserving coherence.

Retunability is the possibility that, with enough bandwidth and control, you might not just steer within the current set of rules - you might nudge which rules apply.

Example (hypothetical): Imagine a material where, under intense coherent driving, the effective interaction strength between particles shifts slightly. Not because the particles changed, but because the feedback environment changed how the particles interact.

This is Layer 3 speculation. We don't know if it's real. But if it exists, it should leave measurable signatures: anomalous scaling, shifted curves, reproducible deviations that survive control experiments.

Crucially: Rule changes aren't free. They're resource-bounded. You can change the game, but you still have to pay for the new board.

6. How We Test the Philosophy (Without Turning It Into Vibes)

The philosophical story only matters if it produces operational, falsifiable claims. CCT uses two main "handles" to make that happen, and sequences the tests to avoid epicycles.

6.1 Phase 1-2: Calibration (Essential First Step)

Before you can claim deviations, you have to prove the methodology works in known regimes.

Phase 1-2 asks:

- Can we reproduce established results using RFH and \(\mathsf{Prog}_T\) (introduced in 6.2 and 6.3)?

- Do our bandwidth, discreteness, and control metrics align with accepted physics?

- Can we control for artifacts of how you measure (e.g., counting clicks vs. measuring smooth oscillations)?

What failure looks like: If RFH and \(\mathsf{Prog}_T\) don't organize known lab systems in a coherent way, CCT is wrong at the methodology level. No point proceeding to speculative claims if the tools don't work.

What success looks like: We get scaling laws, control-efficiency curves, and bandwidth-discreteness relationships that match (or at least don't contradict) standard physics. This validates the framework and establishes baselines.

Where we’re at right now (in plain terms): We’re building a set of worked examples where we take real data and ask a simple question: if we measure “harder,” does the graininess soften in a predictable way? Some cases are clean and instrument-like (LIGO, automotive radar). Others are messier, but still useful for checking that the method transfers (for example: paleomagnetic excursion records, market time series under coarse-graining, and a small public quantum-optics sweep).

6.1.1 What makes a CCT result count (constraint-complete, in plain language)

Most "big claims" die in the same place: the real world. Controllers have delay and limited response. Systems drift. Measurements are noisy. Calibrations overfit.

So CCT treats four ingredients as part of the claim, not as afterthoughts:

- Physical constraint 1: finite actuation response. What you command is not what the system instantly receives; delay and smoothing change the timing of influence.

- Physical constraint 2: drift/noise. Even "the same" run is never exactly the same; you have to generalize across conditions.

- Engineering lever 1: waveform control. Instead of a single step input, you use shaped waveforms (often a simple "kick then hold") with timing as a real knob.

- Engineering lever 2: robust estimation + calibration. You declare a finite-shot regime, report error bars, and test on holdout conditions; when cheap calibration becomes unreliable, you use a gated policy that pays for a more robust check only when needed.
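The first constraint and the first lever can be seen together in a toy actuator. This sketch assumes a first-order lag (\(dy/dt = (u - y)/\tau\)) with a known time constant, a kick at three times the target level, and a 2% settling band; all of these are our illustrative choices. The kick length below is the closed-form value that brings the lag exactly to the target, valid only for this idealized model.

```python
import math

def kick_duration(kick_level: float, tau: float) -> float:
    """Kick length that takes a first-order lag from 0 exactly to 1 (needs kick_level > 1)."""
    return -tau * math.log(1.0 - 1.0 / kick_level)

def settle_time(kick_level: float, kick_len: float, tau: float = 1.0,
                tol: float = 0.02, dt: float = 1e-4, t_max: float = 10.0) -> float:
    """Last time the response y is outside tol of the target 1.0.

    The actuator obeys dy/dt = (u - y) / tau. The command u is kick_level
    for kick_len seconds, then holds at the target. A plain step is
    kick_level = 1.0.
    """
    y, t, last_outside = 0.0, 0.0, 0.0
    while t < t_max:
        u = kick_level if t < kick_len else 1.0
        y += dt * (u - y) / tau
        t += dt
        if abs(y - 1.0) > tol:
            last_outside = t
    return last_outside

tau = 1.0
step = settle_time(1.0, 0.0, tau=tau)                      # about 3.9 tau
kick = settle_time(3.0, kick_duration(3.0, tau), tau=tau)  # about 0.4 tau
print(round(step, 2), round(kick, 2))
```

The kick's timing depends on \(\tau\). If drift changes \(\tau\), a fixed kick over- or undershoots, which is why waveform control is paired with robust calibration on holdout conditions.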

6.2 RFH (Bandwidth -> Discreteness)

If discreteness is a finite-bandwidth projection, then increasing measurement bandwidth should often soften it, in a regime-local, measurable way.

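One common signature is a scaling law of the form

\[ \Delta \propto B^{-\alpha}, \qquad \text{equivalently} \qquad \log \Delta \approx \text{const} - \alpha \log B, \]

where:

- \(\Delta\) is an effective error/discreteness measure (platform-specific).

- \(B\) is an effective bandwidth/throughput measure (platform-specific).

- \(\alpha\) is the magnitude of the slope on a log-log plot of \(\Delta\) against \(B\) (a "zoom efficiency" for that regime).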

In some systems you see smooth power-law behavior in declared regimes (RFH-PL). In others you see banded/stepped structure (RFH-QF). Either way, the claim is testable: you sweep bandwidth and see what the instrument reports.
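In the simplest RFH-PL case, estimating \(\alpha\) from a bandwidth sweep is a straight-line fit in log-log coordinates. The toy platform here is our assumption, not a CCT dataset: each readout averages \(B\) noisy samples, and \(\Delta\) is the spread of that readout across repeats, so \(\alpha = 0.5\) is expected from the familiar \(1/\sqrt{B}\) law. Real platforms need declared regimes, error bars, and holdout tests, and banded (RFH-QF) data should not be summarized by a single slope.

```python
import math
import random

def fit_alpha(bandwidths: list[float], deltas: list[float]) -> float:
    """Least-squares fit of log(Delta) = const - alpha * log(B); returns alpha."""
    xs = [math.log(b) for b in bandwidths]
    ys = [math.log(d) for d in deltas]
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return -sxy / sxx

# Toy platform (our assumption): each readout averages B noisy samples, and
# Delta is the spread of that readout across repeated runs.
rng = random.Random(0)

def readout_spread(b: int, repeats: int = 2000) -> float:
    readouts = [sum(rng.gauss(0.0, 1.0) for _ in range(b)) / b for _ in range(repeats)]
    mean = sum(readouts) / repeats
    return math.sqrt(sum((r - mean) ** 2 for r in readouts) / (repeats - 1))

bandwidths = [1, 4, 16, 64, 256]
deltas = [readout_spread(b) for b in bandwidths]
print(round(fit_alpha(bandwidths, deltas), 3))
```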

6.3 \(\mathsf{Prog}_T\) (Steering per Joule)

If programmability is real, we should be able to score it. \(\mathsf{Prog}_T\) is an attempt to do that: how much reliable steering do you get per joule, over a time horizon \(T\)?

This forces honesty: you cannot claim "control" without paying for sensing, actuation, timing, and feedback.

Why it matters: It's easy to make vague claims about "control" or "programmability." \(\mathsf{Prog}_T\) makes you show the energy bill and demonstrate that your control actually persists over the declared time horizon.
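The formal definition of \(\mathsf{Prog}_T\) lives in the preprint and is not given here, so the following is a hypothetical scoring sketch, not the preprint's estimator. It measures steering as bits of error reduction that still hold at the horizon \(T\), and divides by the full energy bill. The class, field, and function names are ours.

```python
import math
from dataclasses import dataclass

@dataclass
class EnergyBill:
    """Joules spent over the horizon T, split into hypothetical line items."""
    sensing: float
    actuation: float
    computation: float

    def total(self) -> float:
        return self.sensing + self.actuation + self.computation

def prog_t(error_start: float, error_at_horizon: float, bill: EnergyBill) -> float:
    """Hypothetical score: bits of error reduction still holding at T, per joule.

    An error that has drifted back up by the horizon earns nothing.
    """
    bits = max(0.0, math.log2(error_start / error_at_horizon))
    return bits / bill.total()

bill = EnergyBill(sensing=2.0, actuation=1.0, computation=1.0)
print(prog_t(1.0, 0.125, bill))  # 3 bits held at T, over the full 4 J bill
print(3.0 / bill.actuation)      # actuation-only accounting inflates the score
print(prog_t(1.0, 0.5, bill))    # same bill, but the error drifted back by T
```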

6.4 Baby Theorems (Why This Isn't Goalpost Moving)

In Layer 1, CCT proves constraints inside explicit model classes. These "Baby Theorems" are not full physics, but they do something valuable: they carve out forbidden regions so the framework cannot be endlessly patched.

Example: no agent can steer reality faster than they can resolve it.

This is proven formally in toy models. If real systems routinely violate it (under declared assumptions), CCT is wrong in that regime.
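The Baby Theorems themselves are in the preprint and are not reproduced here. A closely related classical result from control theory, the data-rate theorem, conveys the flavor of such a constraint: a scalar plant \(x_{t+1} = a\,x_t + u_t\) with \(|a| > 1\) can be held near zero over a digital sensor link only if the link delivers more than \(\log_2 |a|\) bits per step. The sketch below, with a plant gain, quantizer, and function name of our choosing, shows both sides of that threshold for \(a = 3\) (\(\log_2 3 \approx 1.58\)).

```python
def quantized_control(a: float, rate_bits: int, x0: float = 0.3, steps: int = 40):
    """Stabilize x[t+1] = a*x[t] + u[t] when the sensor sends rate_bits per step.

    The controller only knows an interval [lo, hi] containing x. Each step the
    sensor reports which of 2**rate_bits equal cells holds x; the controller
    cancels the plant's growth around that cell's midpoint.
    Returns (|x| at the end, width of the controller's uncertainty interval).
    """
    lo, hi = -1.0, 1.0
    x = x0
    cells = 2 ** rate_bits
    for _ in range(steps):
        cell = (hi - lo) / cells
        index = min(int((x - lo) / cell), cells - 1)  # the rate_bits-bit report
        midpoint = lo + (index + 0.5) * cell
        x = a * (x - midpoint)                        # x' = a x + u, u = -a * midpoint
        lo, hi = -a * cell / 2, a * cell / 2          # what the controller now knows
    return abs(x), hi - lo

print(quantized_control(3.0, rate_bits=1))  # too few bits: uncertainty explodes
print(quantized_control(3.0, rate_bits=2))  # enough bits: state driven to ~0
```

Each step multiplies the controller's uncertainty by \(a / 2^{R}\), so with 1 bit per step the plant outruns the sensor, and with 2 bits the controller wins.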

6.5 Phase 3+: Deviation Detection (Gated by Phase 1-2)

Only after Phase 1-2 validates the methodology do we probe for deviations:

- Anomalous bandwidth-discreteness exponents

- Uncertainty products \(\Delta x \cdot \Delta p\) that depart from the standard bound \(\hbar/2\) in controlled ways

- Light-cone deformations or regime-dependent "constant" shifts

If we detect reproducible, controlled anomalies that survive measurement-mode controls, we have evidence for the Core Conjecture (laws as emergent attractors, constants as regime parameters).

If we don't, that's also informative: it puts hard bounds on how deep retunability goes.

Key point: Phase 3 is gated. You don't get to claim new physics until you've proven the tools work in old physics.

This sequencing prevents epicycles.

7. How CCT Reinterprets Time and Constants (Layer 3 / Speculative)

Two familiar ideas look different through the CCT lens. These are interpretive reframings, not established results.

Time as feedback rhythm: In this view, time isn't a background stage. It's the ordering of feedback cycles - the rhythm by which changes happen and stabilize. Each cycle is a "now"; the sequence is what we call "time passing." The arrow of time (why we remember the past but not the future) arises from how information flows through those cycles.

Constants as stable settings: In standard physics, constants like c (speed of light), \(\hbar\) (reduced Planck constant), and G (gravitational constant) are treated as fixed. CCT reinterprets them as stable settings in rule-space:

- \(\hbar\) helps set the scale of the "graininess" that shows up when you measure something smooth with limited tools.

- c marks a bandwidth ceiling: the maximum rate at which coherent information propagates.

- G links mass/energy to spacetime curvature (how geometry responds to energy).

The speculative part: If these are stable settings rather than axioms, then under extreme feedback conditions they might shift slightly while remaining coherent (Phase 3+ territory). This is not asserted; it's a testable possibility gated by Phase 1-2 validation.

8. How Right Is This? A Smart Way to Hold the Claim

If you want a layperson's "credence structure," it looks like this:

- High confidence: finite observers face bandwidth/energy tradeoffs; measurement is never free; control has a bill.

- Medium confidence: these tradeoffs organize real lab platforms in a cross-domain way (calibration is the hard part).

- Low (but interesting) confidence: some effective regularities we call "laws" behave like attractors in rule-space, and may be retunable in extreme regimes (Phase 3+ only).

The practical stance is simple: you do not have to buy the ontology to use the tools. You can just check whether the declared scaling, bands, and control-per-joule results replicate.

9. Tight Summary

- Reality can be treated as a continuous, rule-based process.

- Finite instruments compile that continuum into discrete reports; some discreteness may be measurement-induced.

- "Laws" can be operationalized as the regularities that remain stable across observer limits.

- Feedback is a main organizer of structure; programmability measures steering per resource.

- RFH and \(\mathsf{Prog}_T\) are the main test handles; Baby Theorems constrain what is allowed.

- Phase 1-2 is calibration inside known physics; deeper claims are gated by replication.
