CCT Labs: A Reference Lab for Programmable Physics¶

Two Big Problems, One Root Cause¶

AI training uses massive amounts of power — frontier models cost hundreds of millions of dollars, mostly electricity turned into heat.

Spaceflight is still extremely expensive because rockets need exponentially more fuel for each bit of extra performance.

Both problems come from the same place: trying to do ambitious things on hardware that fights the laws of physics instead of working with them.

The Mindset: Let Physics Do the Work¶

If you drop sand on a table, it spreads out and stops without thinking. That's not random — it's nature finding the most stable arrangement. Physics is constantly doing this — it's called "settling." The key insight: settling is a form of computation.

For AI, the trick is devices that settle into answers rather than calculating them step by step. Instead of computing a solution, you encode a problem into a physical system, let it relax to equilibrium, and read out the answer.

For spaceflight, the trick is infrastructure that does the pushing instead of onboard fuel. Ground stations and orbiting relays use light and electromagnetic fields to accelerate, steer, and stabilize vehicles from outside — the spacecraft gets lighter, and the mission gets cheaper.

CCT Labs is building the math and designing the hardware to measure and exploit both. We're not fighting physics. We're learning to let it solve problems for us.

One Stack, Two Frontiers¶

The surprising part: The same basic lab setup used to test "settling" for AI is exactly what’s needed to test beam steering and stability for space.

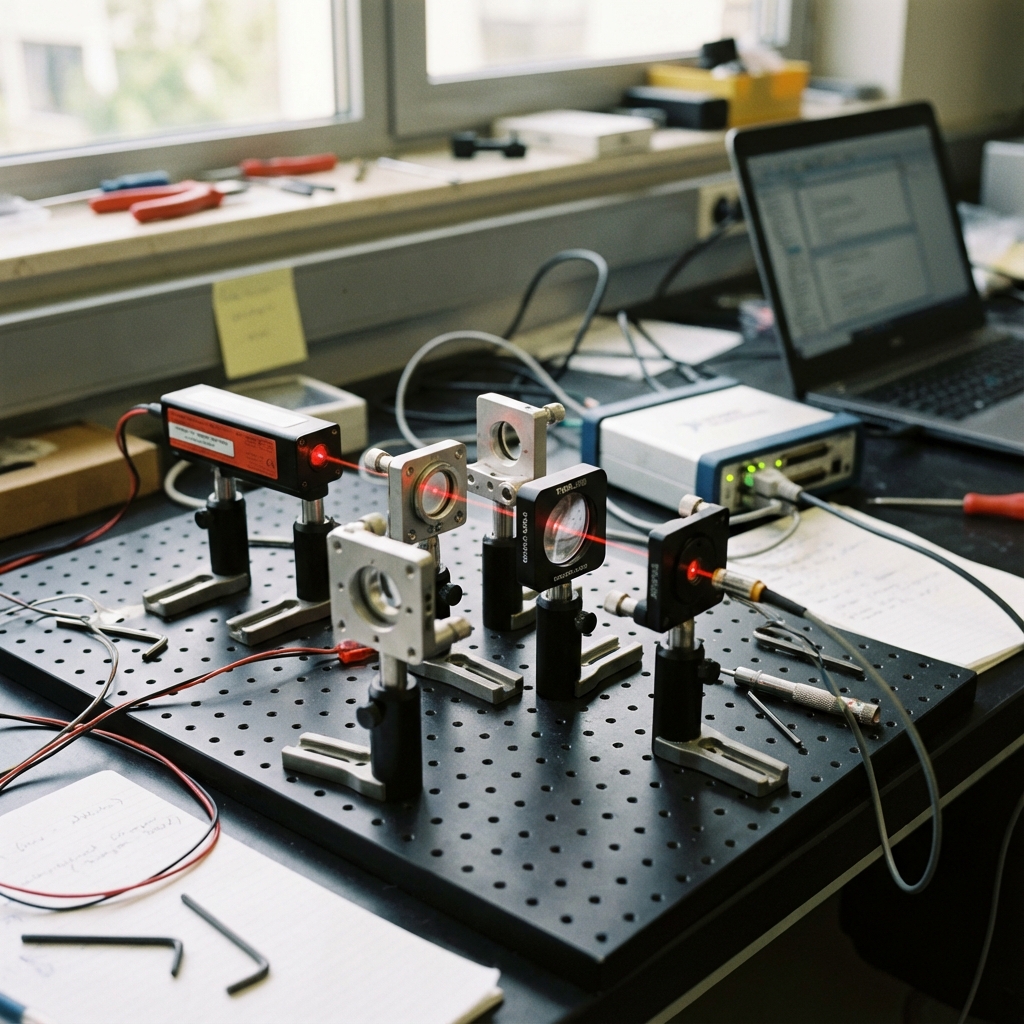

This is because the core hardware stack is the same. Whether we're testing how a device "settles" into an answer or testing how precisely we can aim a beam, we're using the same tools: precise optics, fast feedback, and careful measurement.

We're agnostic about whether we're "reprogramming fundamental physics" or just "getting extremely good at using known physics." The tests don't change either way. What matters is that the tools are the same — and those tools have their highest leverage in space.

What We'll Build First (Year 1)¶

CCT Labs is a lab-first effort: we make ideas real by forcing them into hardware you can measure.

In the first 12 months, we're building three things:

-

A photonic reference bench — optical hardware that reproduces the "response bands" (specific settings where the device becomes unusually controllable) we predicted in simulation. This proves our predictions translate to real devices.

-

A field-control bench — think of it like magnetic levitation, but more precise. Electromagnetic antennas create invisible "pockets" that hold and position test objects. This is the tabletop version of formation control for space.

-

A Prog_T energy ledger — a published recipe for measuring "how much control do you get per joule?" so anyone can compare their results to ours.

Success (in plain terms) means:

- We can steer the system (small input → predictable, repeatable output),

- We can measure its behavior with error bars,

- And the trends we see in hardware match the predictions from simulation (within tolerances).

Important: Year 1 is de-risking, not victory laps. We are not claiming “we solved AI training efficiency” or “we solved space propulsion.” We are measuring whether the underlying physics is controllable and scalable enough to be worth building on.

Two things make these tests harder than they sound:

- Control isn't instantaneous. Real actuators have delay and limited response (what you command gets smeared and arrives late).

- The world drifts. Real devices fluctuate shot-to-shot and change over time, so a calibration that "works once" can fail elsewhere.

And two things make them workable in practice:

- Waveform control. Instead of a single step input, we use shaped waveforms (often a simple "kick then hold") and treat timing as a real knob.

- Robust measurement + calibration. We declare shot budgets, report error bars, and test on conditions we didn't tune on; when cheap calibration becomes unreliable, we use a gated policy that pays for a more robust check only when needed.

Two Tools to Measure "Is This Actually Usable?"¶

RFH: Does more measurement effort help?¶

You can always spend more on measuring — more sensors, more time, more data. But does it pay off?

RFH tells you: sometimes that extra effort pays off quickly (the signal is clean), and sometimes it pays off slowly (you're just averaging noise). In coherent systems like lasers, doubling your effort roughly halves your uncertainty. In noisy systems, you need four times the effort to get that same halving.

So RFH is a "where is the easy win?" detector.

Plain-English translation: RFH answers: “If we try harder to measure this thing, do we get meaningfully better results — or are we mostly just averaging noise?”

Prog_T: How much control per joule?¶

If you put 1 joule of energy into a system, how much useful control do you actually get back?

A clumsy system gives you small, noisy changes for each joule. A well-chosen system gives you large, precise changes. Prog_T measures this: how much steering per unit of energy?

Together, RFH and Prog_T tell you whether a physical device is worth building — or just a demo that won't scale.

Plain-English translation: Prog_T answers: “How much reliable steering do we get for the energy we spend?” It’s our way of separating impressive demos from systems that could scale.

What We’ve Already Seen¶

We've tested RFH on real-world data to see if the scaling patterns hold. The key number is α (alpha) — it measures how fast your precision improves as you invest more effort. Higher α means cleaner signal; lower α means you're fighting noise.

- Gravitational wave detectors (LIGO): α ≈ 0.99 — coherent regime, measurement improves fast

- Camera sensor noise: α ≈ 0.50 — incoherent regime, just averaging noise

- Automotive radar odometry: α ≈ 0.99 — coherent navigation

- ECG heart signals: α ≈ 0.5 — incoherent physiological averaging

- Bioelectric tissue models: α ≈ 0.35 — sub-incoherent, correlated noise

- Lattice simulations ("Cold Melt"): A simulation showing coherence is programmable via field structure — applying structured resonant fields shifts the same material from incoherent to coherent behavior. We saw ~3× efficiency improvement vs. just heating it up.

The same measurement-scaling law appears across completely different domains. That's not proof of universality, but it shows the approach touches reality.

In Simulation: The Predicted Configuration¶

We built a simulated optical device — think carefully shaped glass that bends light in a special way — and found:

- It has a few "good bands" where it becomes unusually coherent and controllable

- One setting (the predicted configuration) shows 4.9× gain (getting ~5× more useful control from the same power) and ~88% coherence — where systems are most controllable

- We wrote predictions in advance and confirmed the device behaves as expected

This gives us confidence the theory makes real, testable predictions — not just nice stories.

AI: Calibration Domain¶

AI is our near-term calibration domain — it gives fast feedback loops for testing the methodology.

Today's AI training is a massive energy-to-heat pipeline: compute a loss, compute gradients, update parameters, repeat millions of times. Analog computing has been tried before, but devices drift, vary unit-to-unit, and pick up noise.

Our bet is the missing piece is measurement. If you can't reliably measure what your device is doing, you can't control it. If you can't control it, you can't program it.

Why we use AI as a testbed: The photonic bench lets us validate RFH and Prog_T quickly — we can run experiments, get results, and iterate in weeks instead of months.

Space: Where We Want to Focus Long-Term¶

Space is our primary goal. If programmable field coherence works as predicted, it's the domain with highest leverage for humanity.

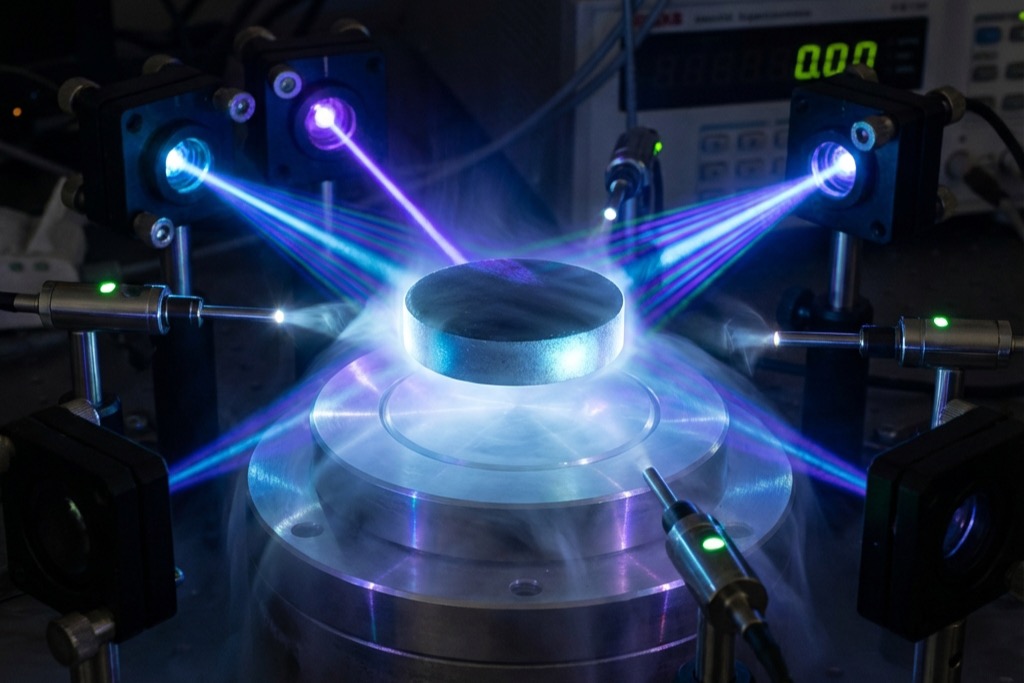

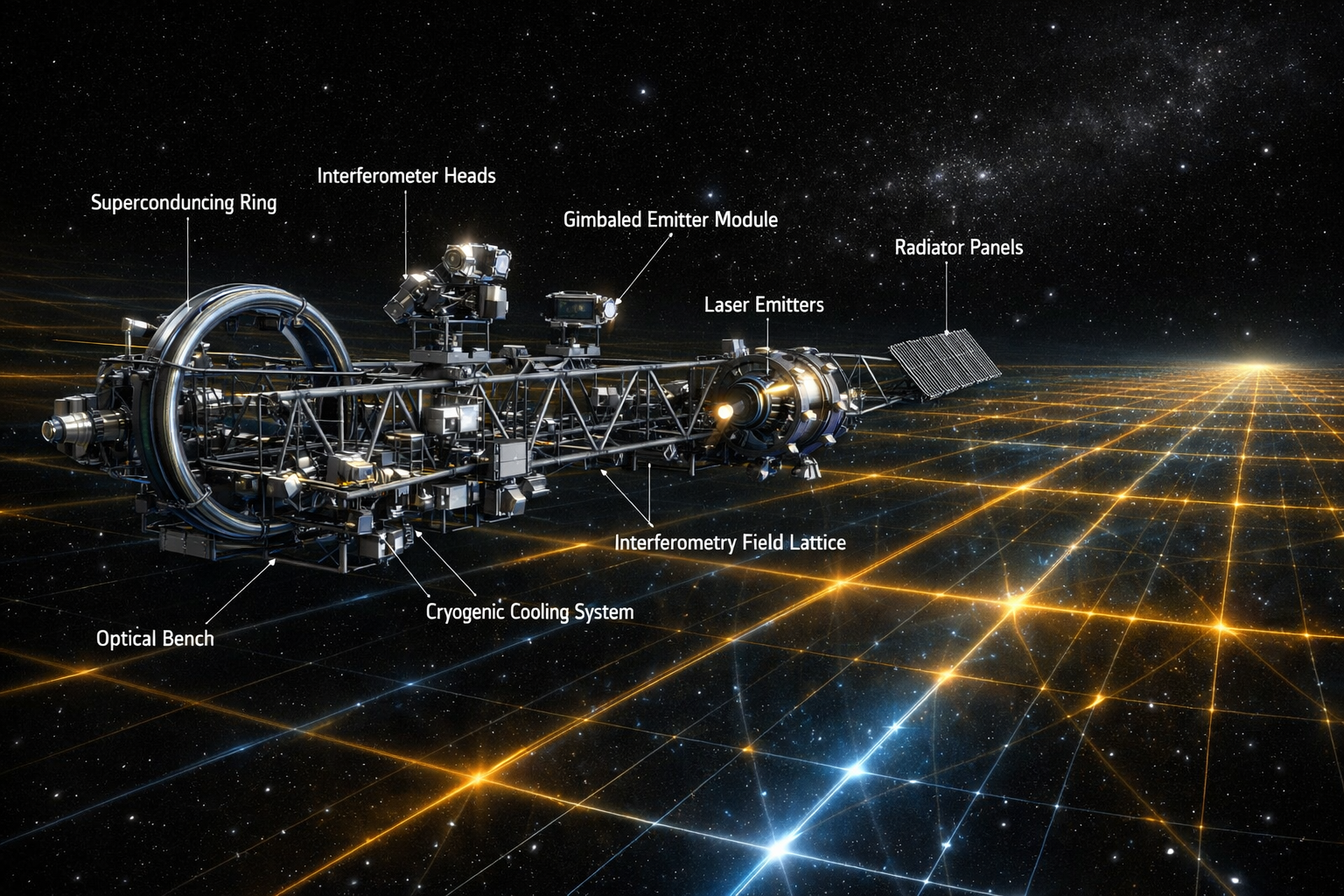

The same measurement framework applies here. Instead of burning fuel, you let electromagnetic fields do the positioning and control. Ground stations and relays create invisible "pockets" that hold, guide, and stabilize vehicles — like magnetic levitation at a distance.

We've found ways to use fields for structural control that work better than you'd expect. The physics is known (electromagnetic fields, standing waves); the engineering is new. The rocket doesn't have to carry fuel for what the infrastructure provides.

We validate this in steps (each one builds on the last):

-

Phase 1 (Field): Build a tabletop electromagnetic setup that holds and positions test objects using field pockets. Measure how efficiently we can hold and move things.

-

Phase 2 (Matter): Use carefully tuned light to flip a material (vanadium dioxide) from insulator to metal — more efficiently than just heating it up. If this works, it proves coherent driving beats brute-force energy.

-

Phase 3 (Stretch): Try the same trick on a superconductor. This is harder and only happens if Phase 2 works.

-

Phase 4 (Year 2+): If Phases 1-3 succeed, we look for subtler effects — does extreme coherence affect signal propagation? We'd measure whether tightly controlled fields produce any detectable timing or phase shifts beyond standard predictions.

Near term: Build the Earth-version of the stack — tracking, field shaping, feedback stability.

Long term: That becomes the foundation for beam-assisted missions and cislunar (Earth-Moon region) infrastructure — lighter spacecraft, field-based positioning, an "external infrastructure" model for space.

Biological Systems¶

Living tissues control their own growth and repair through tiny electrical signals — like a slow, distributed version of how nerves work, but across whole organs. Some researchers think this is how a salamander knows to regrow a leg, not just heal a wound.

Our tools (RFH and Prog_T) can measure "how controllable is this tissue?" We provide the math to partner labs doing experiments; we don't run the biology ourselves.

How We're Focusing¶

Primary focus: Space. If programmable field coherence works, space is the domain with highest leverage — lighter spacecraft, field-based positioning, infrastructure that does the pushing.

Calibration domains: AI and Biology. These give us fast feedback loops to test and validate the methodology. AI (photonic bench) lets us iterate in weeks; Biology (partner labs) extends the tools into living systems. We're not building AI chips or running bio labs — we're proving the measurement framework works.

Beyond CCT Labs: The same methodology could extend to robotics, medical devices, industrial sensing, and energy systems — any field where precise measurement and control of physical systems matters. These are downstream possibilities across many fields, not our current projects.

The 3 gaps we're looking to resolve in Year 1: (1) a precise, hardware-measurable definition of "coherence," (2) a full energy breakdown for Prog_T, and (3) the first AI benchmark task. Until these are locked down, we're de-risking — not claiming victory.

The Next Step¶

To build and test real lab hardware, and turn the math and tools into a method other groups can also use.

We run a loop: Theory → Simulation → Hardware → back to theory. That's how we avoid becoming "string theory vibes" — we keep forcing the ideas into devices you can measure.